Hello everyone,

Weird to see this new forum… but anyway, the new version of TrueNAS has changed the way it allocates memory for ARC, and I would like to start another discussion on the matter to try to figure out a solution to what is, for me, a major problem.

Some background: I moved this specific machine away from Proxmox because its root filesystem filled up from over-provisioning (which shouldn’t even happen to begin with) and I could not repair it. I tried to free up space, but restoring everything just wouldn’t happen. Since that machine mostly acted as a storage server, I figured I would move everything to TrueNAS Scale, which is just as competent a hypervisor for what I need to do.

I’m having two major problems. The first is that I had to free up a PCIe slot and order a Quadro P620 to act as the system GPU, so that I can pass my Radeon Pro W6600 through to a Windows VM. I’m still waiting on that GPU, but with Proxmox I was able to simply pass that single GPU through to the VM and run headless. I do think this should absolutely be an option: there is no reason to ‘waste’ a GPU and a valuable PCIe slot on nothing but a text UI that will never be used unless something goes wrong (in which case you could still override and take the GPU back, ideally with a boot menu entry to minimise the effort).

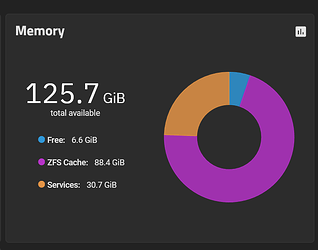

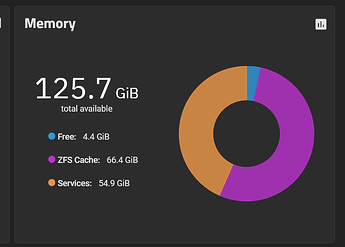

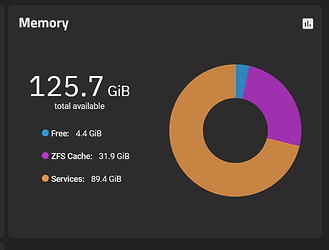

The second major problem is memory. If I start all the VMs at boot, all of my 128GB of memory, minus whatever the system uses, is available to me, so the VMs start fine and whatever memory is left over remains free.

But once I start using the system, the ARC cache eats ALL the available free RAM. If I then want to create and start another VM, because my needs change or because I’m still setting them up one by one, I cannot, unless I tell it that I understand the risks and FORCE the VM to start. Depending on how much memory is actually available, that is likely to lead to a full crash.

I also cannot simply stop a VM to change something and then start it again, because as soon as I stop it, the ARC cache will happily eat all of the memory the VM was using.

From online research, it seems people used to complain that ARC only used 50% or so of system memory, and now it just eats as much as it can. In my case the memory does get filled, because I also have SMB activity in the background, such as downloads writing to disk.
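For anyone who wants to check the same thing on their own box, here is a rough sketch of how the current ARC size and its ceiling can be read. It assumes the standard OpenZFS-on-Linux stats file at `/proc/spl/kstat/zfs/arcstats` and its `size` and `c_max` fields; the function names are my own, and I have not checked whether TrueNAS exposes these numbers any differently.

```python
from pathlib import Path

# Assumed location of the ARC statistics on OpenZFS for Linux.
ARCSTATS_PATH = Path("/proc/spl/kstat/zfs/arcstats")


def parse_arcstats(text):
    """Return {name: value} for the 'name type data' rows of arcstats."""
    stats = {}
    for line in text.splitlines():
        parts = line.split()
        # Data rows have exactly three columns with an integer in the last one;
        # the two header lines do not match and are skipped.
        if len(parts) == 3 and parts[2].isdigit():
            stats[parts[0]] = int(parts[2])
    return stats


def summarize(stats):
    """Format the current ARC size and its maximum target in GiB."""
    gib = 2**30
    return (
        f"ARC size {stats['size'] / gib:.1f} GiB, "
        f"target max {stats['c_max'] / gib:.1f} GiB"
    )


if __name__ == "__main__":
    if ARCSTATS_PATH.exists():
        print(summarize(parse_arcstats(ARCSTATS_PATH.read_text())))
    else:
        print("arcstats not found; is the ZFS module loaded?")
```

Running this before and after stopping a VM should show whether ARC really grows into the memory the VM gave back.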

I have seen some overrides suggested, but also people saying they no longer apply because the system adjusts ARC automatically when you add more VMs. If that is the case, it doesn’t seem to be working as it should.

The behaviour I would like to see is, ideally, a new menu on the left side of the web UI where you can manually allocate and control ARC memory. Freeing up memory should take just one button: limit ARC to xxGB, click, and the memory is freed either immediately or gradually (if data needs to be written to disk first), with a progress bar.

After that, the freed memory would stay untouched/reserved, so I can start and stop VMs with it and all the VMs can run without being affected by ARC. ARC would still have access to almost all the rest of the system’s memory, just with some manual control.

The automatic behaviour can stay as it is now, because I absolutely understand why a system that is primarily a file server would want to use all the RAM for ARC. But people also have to run their own VMs and containers, and we all have budget limits on how many servers and OSes we can have, so flexibility here is always welcome.
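To make the "limit to xxGB" idea concrete, here is a minimal sketch of the arithmetic I have in mind, plus the OpenZFS module parameter I understand such a limit would map to on Linux (`zfs_arc_max`). The VM sizes and the 8 GiB headroom are made-up example numbers, the function names are hypothetical, and I am assuming that a value written this way is not persistent across reboots and may be overwritten by TrueNAS itself. ARC also may not shrink immediately after the limit is lowered.

```python
GIB = 2**30

# OpenZFS-on-Linux module parameter for the ARC ceiling, in bytes.
ARC_MAX_PARAM = "/sys/module/zfs/parameters/zfs_arc_max"


def arc_cap_bytes(total_gib, vm_gib, headroom_gib=8):
    """ARC ceiling = total RAM - memory reserved for VMs - OS headroom."""
    cap_gib = total_gib - sum(vm_gib) - headroom_gib
    if cap_gib <= 0:
        raise ValueError("VM reservations and headroom exceed total memory")
    return cap_gib * GIB


def apply_cap(cap_bytes, param_path=ARC_MAX_PARAM):
    """Write the ceiling to the module parameter (needs root)."""
    with open(param_path, "w") as f:
        f.write(str(cap_bytes))


if __name__ == "__main__":
    # Example only: 128 GiB machine, three hypothetical VMs.
    cap = arc_cap_bytes(128, [32, 16, 16])
    print(f"Would set zfs_arc_max to {cap} bytes ({cap // GIB} GiB)")
    # apply_cap(cap)  # left commented out on purpose; run as root at your own risk
```

With those example numbers the ceiling comes out at 56 GiB, which would leave the VM memory alone no matter how busy SMB gets. A menu in the web UI could do essentially this calculation from the configured VM sizes.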

Thanks!