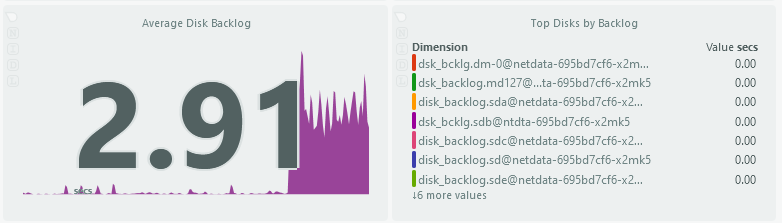

I’ve been having issues lately with “disk backlog” slowing VMs, containers, and the UI to a crawl until the backlog clears. I’ve monitored with iotop and netdata, but I cannot pin down the cause. Can anyone provide any insight into disk backlog, its causes, remedies, etc.?

If you’re on 24.04.0 it could be related to this:

I’m on Cobia 23.10.2

Maybe provide some more details of your setup?

How full is your pool? What is it composed of? What is its layout?

Etc

Info on hardware/layout is in my signature. The backlog occurs mostly on a mirrored SSD pool dedicated to VMs. That pool is at 52% capacity. In any case, have you ever dealt with disk backlog before?

No idea what it is.

I thought it might be iowait, or related to the pool being too full.

So, I suspect something is dribbling writes to your pool.

Iowait appears to be directly related to disk backlog. From what I’ve read, though, iowait isn’t a great metric to rely on. When this is happening, all processors fill up with white bars (iowait) in htop.
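
For anyone who wants to look at backlog outside of netdata, here is a minimal sketch. It assumes a Linux host (as on TrueNAS SCALE) and assumes that the backlog chart is derived from the "weighted time spent doing I/Os" counter, which is the 11th statistics field in `/proc/diskstats`. The function names `parse_diskstats` and `backlog_ms_per_s` are made up for this example.

```python
def parse_diskstats(text):
    """Map device name -> weighted milliseconds spent doing I/O.

    Each /proc/diskstats line is: major, minor, device name, then the
    statistics fields. The weighted I/O time is the 11th statistic,
    i.e. index 13 after splitting on whitespace.
    """
    weighted = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) < 14:
            continue  # skip blank or truncated lines
        weighted[parts[2]] = int(parts[13])
    return weighted


def backlog_ms_per_s(before, after, interval_s):
    """Growth of the weighted I/O time per second of wall time, per device.

    A value that stays high means requests are queueing faster than the
    disk can complete them.
    """
    return {
        dev: (after[dev] - before[dev]) / interval_s
        for dev in after
        if dev in before
    }
```

To use it, read `/proc/diskstats` twice a few seconds apart, pass each snapshot through `parse_diskstats`, and hand both results plus the interval to `backlog_ms_per_s`. Comparing the per-device numbers should at least show which disk is building the queue.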

I decided to TRIM the SSD. I had always kept auto-trim disabled, as per the defaults. At first glance, this appears to have alleviated the disk backlog, but it will take more time to see whether the improvement persists.

I had only used TRIM once before, a few months ago. I kept reading conflicting information on whether it is needed, and since it defaults to off, I never bothered with it much.

I will report back with my findings in the future.

After 48 hours, I haven’t seen much of an issue with disk backlog anymore. The SSDs for my boot and VM pools needed a TRIM. I’ve now enabled a weekly cron job to TRIM both.
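
In case it helps someone else, here is a rough sketch of what a weekly TRIM job could call. It assumes the OpenZFS `zpool trim <pool>` command is available on the host. The pool names and the helper names `trim_command` and `trim_pools` are placeholders for illustration, not the actual setup described above.

```python
import subprocess


def trim_command(pool):
    """Build the argument list for a manual TRIM of one pool."""
    return ["zpool", "trim", pool]


def trim_pools(pools, run=subprocess.run):
    """Start a TRIM on each pool in turn.

    `run` is injectable so the function can be dry-run or tested without
    touching real pools. With the default, a failing zpool call raises
    subprocess.CalledProcessError.
    """
    for pool in pools:
        run(trim_command(pool), check=True)
```

Calling `trim_pools(["boot-pool", "vm-pool"])` (hypothetical pool names) from a script scheduled weekly would kick off the TRIM, and `zpool status -t` can then be used to check its progress.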