Hello all, I am new to TrueNAS, and recently built my first home server. The components are as follows:

TrueNAS-SCALE-23.10.2

Mobo: Asus Z170-AR

CPU: i5-6600k

RAM: 2x8GB 3200MHz G.Skill Ripjaws

Storage:

4 x HGST Ultrastar HUH728080ALE604 8TB 7200RPM SATA 6Gb/s (Configured in RAIDZ1)

2 x 256GB SATA SSDs, 1 used for boot and 1 used for apps

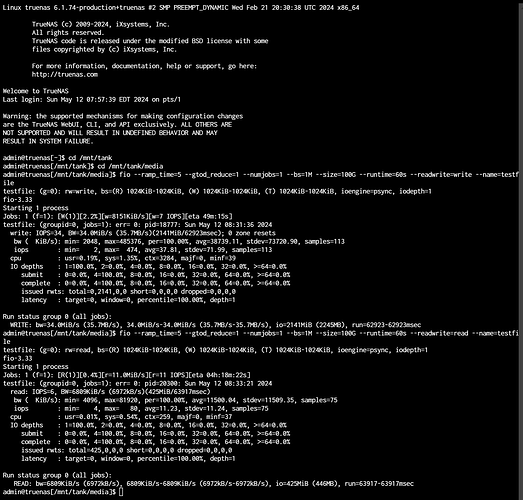

The performance feels incredibly slow, with only 34Mbps writes and 6Mbps reads. I am not sure what is wrong or where to begin.

See image of FIO test for reads and writes.

I would appreciate any help on this!

I am by no means an expert (I am new to TrueNAS too), but my understanding is that ZFS needs a fair amount of RAM to be performant. 16GB, I think, is the minimum.

RAIDZ1 is also not massively performant. If you need more speed and IOPS, try two vdevs of mirrored disks.
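To put rough numbers on that trade-off, here is a small sketch of usable capacity for the four 8TB drives in each layout. It ignores ZFS metadata, padding, and reserved space, so real figures will be somewhat lower, and the function names are made up for illustration.

```python
def raidz_usable_tb(disks: int, disk_tb: float, parity: int = 1) -> float:
    """Approximate usable space of one RAIDZ vdev: only the data disks count."""
    return (disks - parity) * disk_tb


def mirror_usable_tb(vdevs: int, disk_tb: float) -> float:
    """Approximate usable space of striped mirrors: one disk's worth per vdev."""
    return vdevs * disk_tb


if __name__ == "__main__":
    print("RAIDZ1, 4 x 8TB:  ", raidz_usable_tb(4, 8), "TB")
    print("2 x 2-way mirrors:", mirror_usable_tb(2, 8), "TB")
```

By this rough count, mirrors give up a third of the usable space. The usual rule of thumb is that random IOPS scale with the number of vdevs, so two mirror vdevs should handle small random I/O better than one RAIDZ1 vdev, though large sequential transfers may not improve much.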

Do you have an fio test of these drives outside of ZFS/TrueNAS (e.g., on ext4) that you can compare them to?

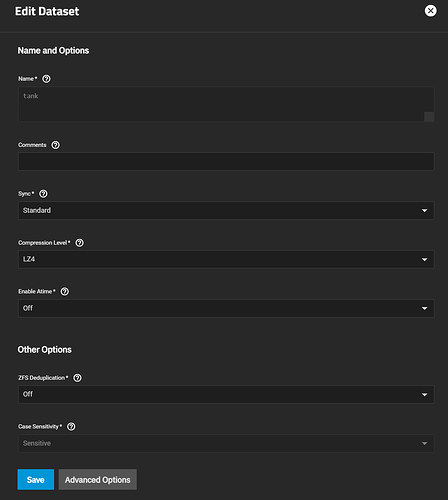

What record size is your dataset set to? Record size can have a huge impact on performance.

Do you have dedup (hopefully not with only 16GB of RAM!) or compression turned on?
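If it helps, all three properties can be read in one go with `zfs get`. A minimal sketch, assuming it runs on the NAS itself; the dataset name `tank/data` is a placeholder, and the helper names are made up for illustration.

```python
import subprocess

PROPS = "recordsize,compression,dedup"


def parse_zfs_get(output: str) -> dict[str, str]:
    """Parse `zfs get -H -o property,value` output (one tab-separated pair per line)."""
    result = {}
    for line in output.splitlines():
        if not line.strip():
            continue
        prop, value = line.split("\t", 1)
        result[prop] = value.strip()
    return result


def dataset_props(dataset: str) -> dict[str, str]:
    """Run zfs get against the dataset and return {property: value}."""
    out = subprocess.run(
        ["zfs", "get", "-H", "-o", "property,value", PROPS, dataset],
        check=True, capture_output=True, text=True,
    ).stdout
    return parse_zfs_get(out)
```

Calling `dataset_props("tank/data")` on the server should return something like a dict with `recordsize`, `compression`, and `dedup` keys; the same values are also visible in the dataset's settings in the web UI.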

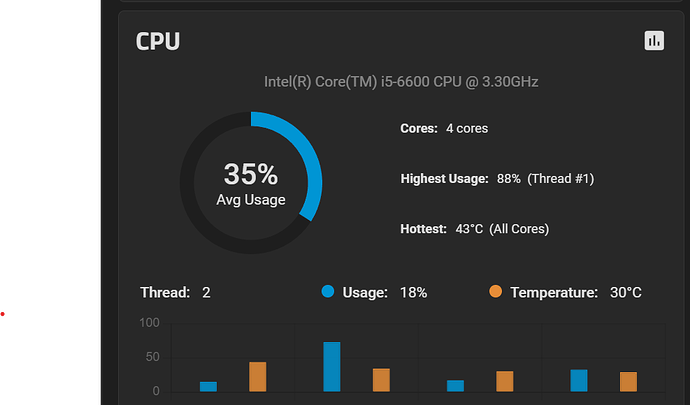

What do CPU usage and the other htop metrics look like during load testing?

Hello, thanks for the tips! I bought these drives specifically for this build, so I don’t have more data on them. My record size is set to 1M, and compression was left at the default, LZ4.

The CPU usage bounces all around, between 35 and 75%, with single thread between 60 and 90%.

Cool. In my unprofessional opinion, I’d start by disabling compression and seeing how it performs without it. That 6th gen i5 might not be powerful enough to handle LZ4 in addition to the ZFS overhead.

As an experiment it might be fun, but with modern CPUs, there’s almost no reason to disable inline compression, even if the files on the dataset are mostly incompressible.

- For LZ4, there is early abort

- For LZ4 (default) and ZLE the overhead is almost nothing

- Any inline compression enabled will remove the extraneous padding at the end of a file’s last block

- Blocks that are compressed are pulled faster from disk

- Even seemingly incompressible files may contain compressible sections, such as metadata or runs of zeros somewhere in the middle
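A quick way to see the padding and runs-of-zeros points for yourself is sketched below. Python's standard library has no LZ4, so this uses zlib at its fastest level as a stand-in; the exact ratios and speeds will differ from what ZFS does, but the pattern should be similar. The 1 MiB block size is chosen to match a 1M recordsize.

```python
import os
import zlib

BLOCK = 1024 * 1024  # 1 MiB, matching a 1M recordsize


def compressed_size(data: bytes) -> int:
    """Size in bytes after zlib compression at its fastest level (stand-in for LZ4)."""
    return len(zlib.compress(data, 1))


zero_padding = bytes(BLOCK)                          # like the unused tail of a last block
random_data = os.urandom(BLOCK)                      # stands in for already-compressed media
mixed = os.urandom(BLOCK // 2) + bytes(BLOCK // 2)   # incompressible data plus a run of zeros

for name, data in [("zeros", zero_padding), ("random", random_data), ("mixed", mixed)]:
    print(f"{name:>6}: {len(data)} -> {compressed_size(data)} bytes")
```

The all-zero block shrinks to almost nothing, the random block does not shrink at all, and the mixed block lands in between, which is the case for leaving compression on even for mostly incompressible data.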


Are these sequential reads/writes? I have trouble reading screenshots.

Thank you for the information! These results are sequential from a 60-second test of 1MB writes, using FIO. Hope this answers your question!
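For anyone who wants to reproduce a test like this without relying on the screenshot, it could be launched roughly as below. The exact options from the original run are not stated in the thread, so the target directory, file size, and I/O engine here are assumptions, and the helper name is made up.

```python
import shlex


def fio_seq_cmd(directory: str, rw: str = "write", runtime_s: int = 60) -> list[str]:
    """Build an fio command line for a time-based sequential test with 1M blocks."""
    if rw not in ("read", "write"):
        raise ValueError("rw must be 'read' or 'write' for a sequential test")
    return [
        "fio",
        f"--name=seq-{rw}",
        f"--directory={directory}",  # assumed: a directory on the dataset under test
        f"--rw={rw}",                # sequential read or sequential write
        "--bs=1M",                   # 1MB blocks, as described in the post
        "--size=4G",                 # assumed test file size
        f"--runtime={runtime_s}",
        "--time_based",              # keep running for the full runtime
        "--ioengine=psync",          # assumed I/O engine
        "--group_reporting",
    ]


print(shlex.join(fio_seq_cmd("/mnt/tank/test", "write")))
```

The printed line can be pasted into a shell on the NAS. For read tests it is worth using a file larger than RAM, since otherwise much of the data may be served from the ZFS cache rather than the disks.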

How much free space is there on your pool?

What does your RAM usage look like?

Do your apps read/write to the storage pool or only on their dedicated ssd?
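For the free-space question, the numbers can be pulled from `zpool list` in script-friendly form. A rough sketch, assuming it runs on the NAS; the helper names are made up. As far as I know, for RAIDZ pools `zpool list` reports raw space including parity, so the percentage is more useful than the absolute figures.

```python
import subprocess


def parse_zpool_list(output: str) -> list[dict]:
    """Parse `zpool list -H -p -o name,size,allocated,free` output."""
    pools = []
    for line in output.splitlines():
        if not line.strip():
            continue
        name, size, alloc, free = line.split("\t")
        size, alloc, free = int(size), int(alloc), int(free)
        pools.append({
            "name": name,
            "free_bytes": free,
            "used_pct": round(100 * alloc / size, 1),
        })
    return pools


def pool_usage() -> list[dict]:
    """Run zpool list and return per-pool free space and percent used."""
    out = subprocess.run(
        ["zpool", "list", "-H", "-p", "-o", "name,size,allocated,free"],
        check=True, capture_output=True, text=True,
    ).stdout
    return parse_zpool_list(out)
```

RAM usage is easiest to read from the TrueNAS dashboard or from htop, which also shows whether the apps are competing with ZFS for memory.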