Hello,

I seem to be having an issue where my snapshots are only kept for a seemingly arbitrary amount of time (I think 1 week), even with a 1 month retention period. My only remaining guess is that replication is somehow deleting them.

When the snapshots are deleted on the HDD mirror, the next replication fails because the dataset no longer has an incremental base.
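To illustrate what "no incremental base" means here, below is a minimal sketch (not TrueNAS's actual logic) that finds the newest snapshot present on both the source and the destination. The dataset and snapshot names are hypothetical, and matching by name is a simplification, since ZFS itself matches snapshots by GUID. The input lists could be gathered with `zfs list -H -t snapshot -o name -s creation <dataset>`.

```python
def snapshot_suffix(full_name: str) -> str:
    """Return the part after '@' in 'pool/dataset@snapname'."""
    return full_name.split("@", 1)[1]


def latest_common_snapshot(source_snaps, dest_snaps):
    """Return the newest snapshot name present on both sides, or None.

    Both lists are assumed to be ordered oldest to newest, e.g. the
    output of `zfs list -H -t snapshot -o name -s creation <dataset>`.
    Matching by name is a simplification; ZFS compares snapshot GUIDs.
    """
    dest_names = {snapshot_suffix(s) for s in dest_snaps}
    # Walk the source from newest to oldest and stop at the first match.
    for snap in reversed(source_snaps):
        name = snapshot_suffix(snap)
        if name in dest_names:
            return name
    return None


# Hypothetical names, for illustration only.
source = ["ssd/veeam@auto-2025-06-01", "ssd/veeam@auto-2025-06-08"]
dest = ["mirror/veeam@auto-2025-06-01"]
print(latest_common_snapshot(source, dest))
```

If this returns `None`, there is no shared snapshot left to send an incremental stream from, which matches the failure described above.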

All of my daily snapshots on the mirror pool are retained for the correct amount of time; it’s just the weekly snapshots that are deleted. Any snapshots transferred to the archive pool are maintained.

I have tried quite a few changes and tweaks to the setup, but the snapshots have been deleted consistently ever since I moved to my new HDD mirror method. I never had any issues when replicating directly from the SSD to the Archive drives. I do it this way because the Archive drives are loud and I want them to idle as much as possible.

My current setup is listed below (hopefully that is enough info; if not, I’m happy to post more). Hopefully I’m just doing something stupid. Any help is greatly appreciated.

Thanks,

Josh

TrueNAS version:

Community 25.04.1 (but this has been an issue for 2 months, so across multiple versions)

My current setup is as follows:

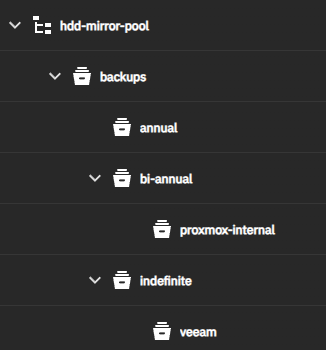

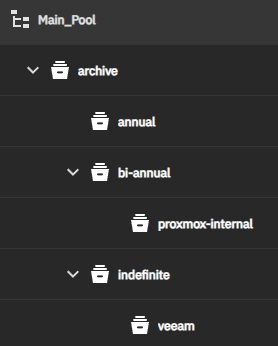

SSD daily snapshot → (weekly replication) → HDD mirror → (monthly replication) → HDD RaidZ1 archive

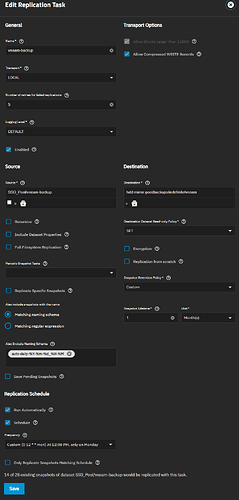

I’m going to use Veeam as the example; Proxmox uses the exact same method, just with a different final retention.

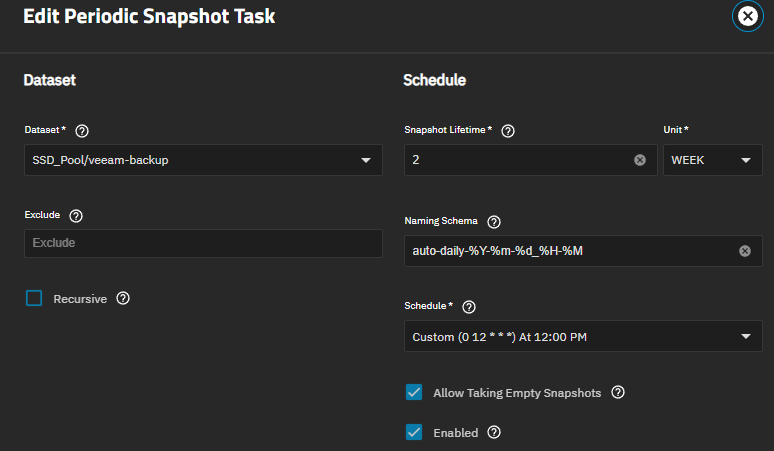

First step - Snapshot SSD:

Second step - Replicate to specific HDD mirror dataset:

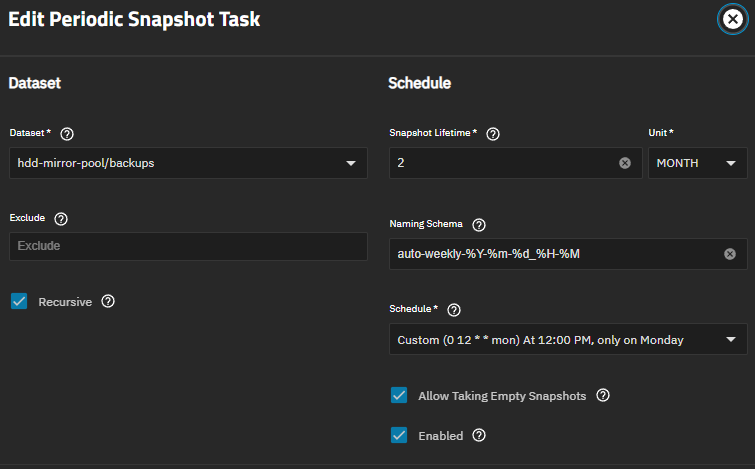

Third step - Recursive snapshot HDD mirror:

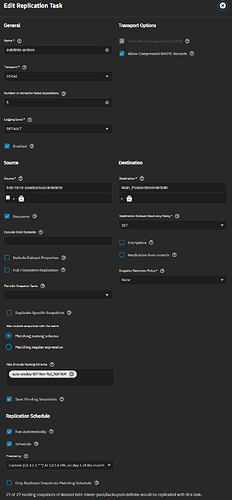

Fourth step - Replicate to specific Archive dataset: