I’m experiencing slow SMB read speeds on a Windows 11 client reading from TrueNAS SCALE 24.10.2 over a 10GbE connection.

I really hope someone can give me a hand here, as I’m completely lost on what else I can change or try.

My setup:

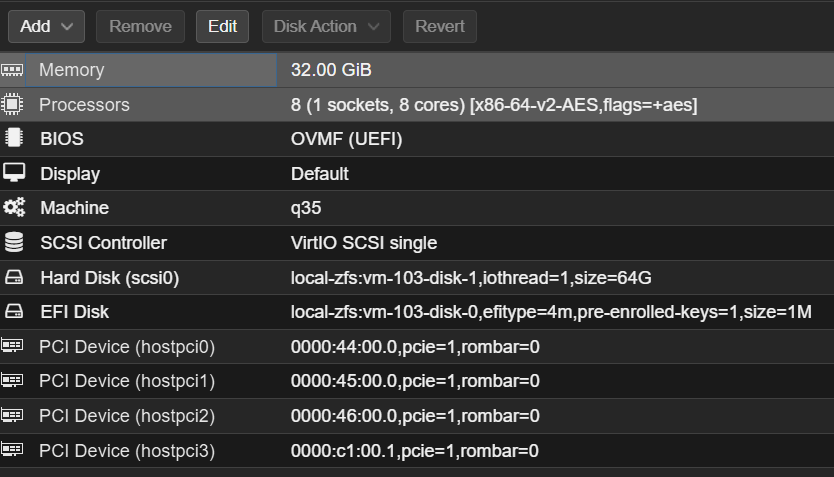

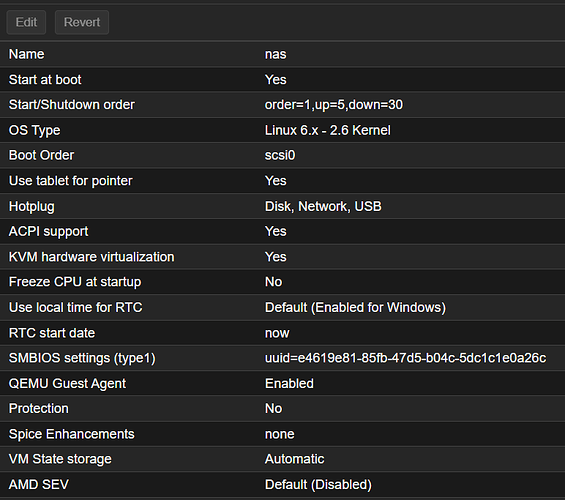

VM: TrueNAS SCALE 24.10.2 in Proxmox (q35, VirtIO SCSI single)

8 cores of an EPYC 7402

96GB RAM assigned to TrueNAS

2 x PM983 NVMe, PCIe passthrough

1 x Mellanox ConnectX-4 Lx 25GbE, PCIe passthrough

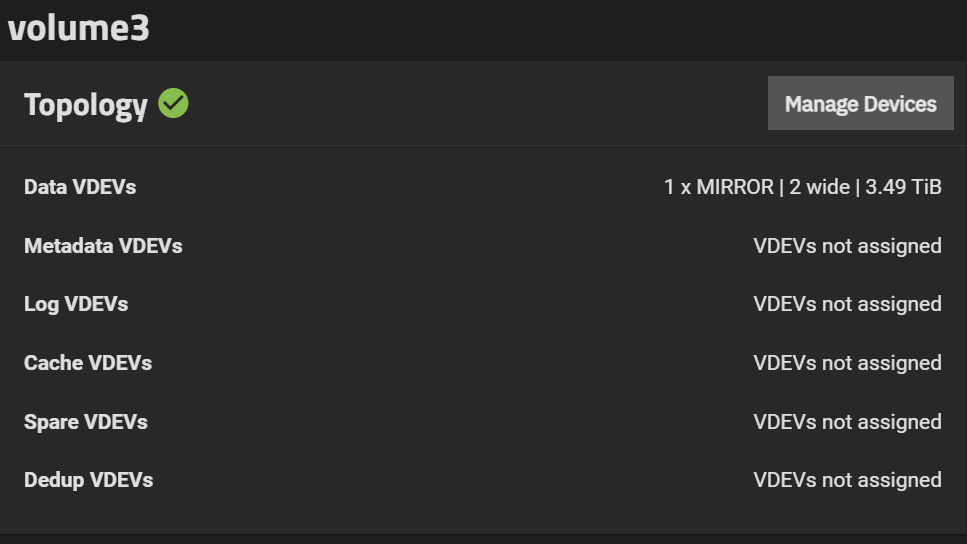

I have a 2-wide ZFS mirror of the 2 x PM983, exported as an SMB Multichannel share.

The problem:

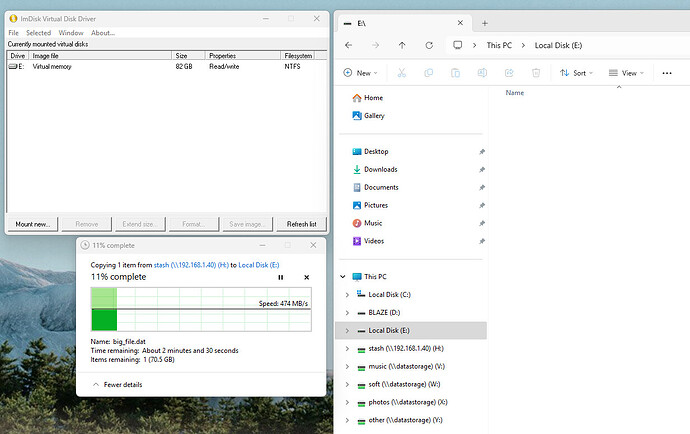

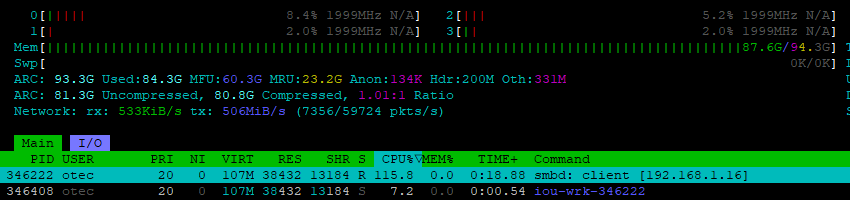

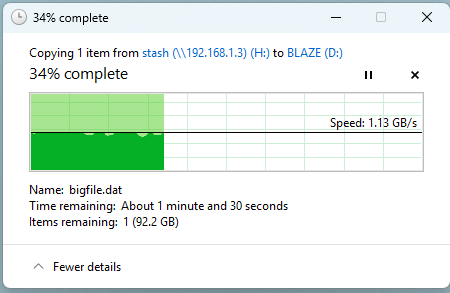

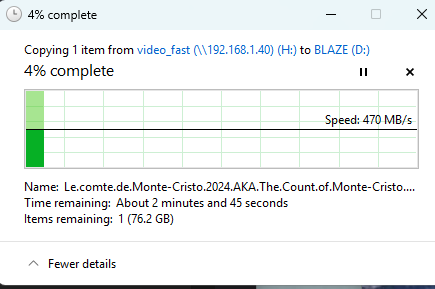

I get ~450MB/s read speed from that share on TrueNAS.

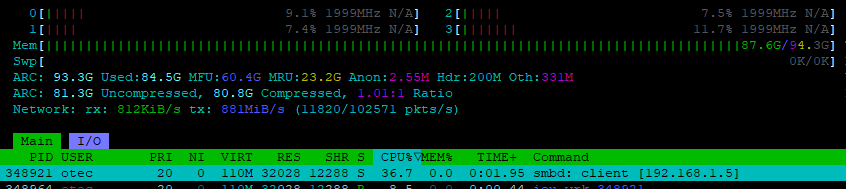

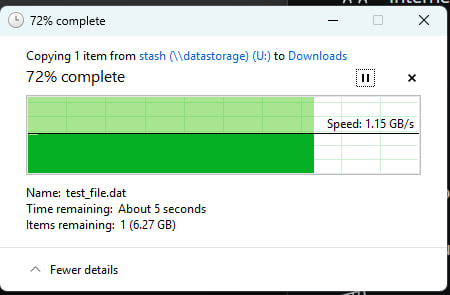

By comparison, I get ~1.15GB/s read speed from an Ubuntu-based NAS (LVM RAID-1 of Exos X18 SATA spinning disks with an NVMe read cache in front; see screenshots above).

TrueNAS 24.10.2 runs Samba 4.20, which is pretty modern. It detects RSS support and reads the interface speed correctly, but regardless of that I also tried setting the options explicitly from the CLI:

`interfaces = "x.x.x.x;capabilities=RSS,speed=25....lots of zeros"`

via `service smb update smb_options=`, followed by an smbd restart.

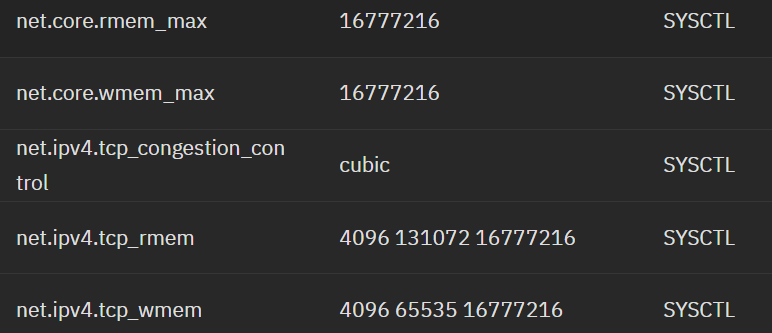
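For reference, the smb.conf(5) man page documents the extended `interfaces` syntax with a `speed` key given in bits per second and a `capability` key (singular, values `RSS` or `RDMA`), which differs from the `capabilities=RSS` spelling in the value above. The sketch below is a hypothetical shell helper (not part of TrueNAS or Samba) that prints such a value; the address and the 25 Gbit/s figure in the usage line are taken from this post purely for illustration.

```shell
#!/bin/sh
# Hypothetical helper (not a TrueNAS or Samba tool): print an extended
# smb.conf "interfaces" value for one address.
# Assumes the smb.conf(5) key names: speed (bits per second) and
# capability (RSS or RDMA), so 25 Gbit/s becomes speed=25000000000.
samba_iface_value() {
  addr="$1"   # server address, e.g. 192.168.1.40 from this post
  gbit="$2"   # link speed in Gbit/s
  bps=$(awk -v g="$gbit" 'BEGIN { printf "%.0f", g * 1e9 }')
  printf '"%s;capability=RSS,speed=%s"\n' "$addr" "$bps"
}

samba_iface_value 192.168.1.40 25
```

The printed string, quotes included, is what would follow `interfaces =` in the SMB options.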

I also tried these options (although, from what I’ve read, they are deprecated in modern Samba):

`aio read size = 1` or `16 * 1024`

`use sendfile = yes`

The ZFS mirror itself is fast: fio reports 6.6GB/s for sequential reads (the run below shows 4365MiB/s, about 4.6GB/s):

```
# fio --name=fio_test --ioengine=libaio --iodepth=16 --direct=1 --thread --rw=read --size=1G --bs=4M --numjobs=1 --time_based --runtime=30

Run status group 0 (all jobs):
READ: bw=4365MiB/s (4577MB/s), 4365MiB/s-4365MiB/s (4577MB/s-4577MB/s), io=128GiB (137GB), run=30001-30001msec
```

The client-to-TrueNAS network handles 10Gbps easily; iperf3 stats:

```
Accepted connection from 192.168.1.16, port 60898
[ 5] local 192.168.1.40 port 5201 connected to 192.168.1.16 port 60899
[ 8] local 192.168.1.40 port 5201 connected to 192.168.1.16 port 60900
[ 10] local 192.168.1.40 port 5201 connected to 192.168.1.16 port 60901
[ 12] local 192.168.1.40 port 5201 connected to 192.168.1.16 port 60902
[ 5] 0.00-1.00 sec 299 MBytes 2.51 Gbits/sec
[ 8] 0.00-1.00 sec 299 MBytes 2.51 Gbits/sec
[ 10] 0.00-1.00 sec 292 MBytes 2.45 Gbits/sec
[ 12] 0.00-1.00 sec 292 MBytes 2.45 Gbits/sec
[SUM] 0.00-1.00 sec 1.15 GBytes 9.91 Gbits/sec
```

It’s a very basic setup, as you can see: everything is fast and should be fast for a client.

But it is not: the maximum I get when copying files from the SMB share is 470MB/s.

Client: Windows 11 Pro (Mellanox ConnectX-4 Lx running at 10Gbps)

SMB Multichannel is 100% enabled and in use (I can even confirm this in Wireshark by looking at the SMB2 negotiation packets: the server offers multichannel and the client accepts it).

Windows client:

```
PS C:\Windows\System32> Get-SmbConnection

ServerName   ShareName  UserName     Credential  Dialect NumOpens
----------   ---------  --------     ----------  ------- --------
192.168.1.40 video_fast KORESH\admin KORESH\otec 3.1.1   2

PS C:\Windows\System32> Get-SmbMultichannelConnection

Server Name  Selected Client IP    Server IP    Client Interface Index Server Interface Index Client RSS Capable Client RDMA Capable
-----------  -------- ---------    ---------    ---------------------- ---------------------- ------------------ -------------------
192.168.1.40 True     192.168.1.16 192.168.1.40 6                      2                      True               False
```

That’s it. I tried numerous sysctl changes, but there’s little point, since iperf3 already shows the 10Gbps link fully saturated.
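As a quick sanity check on the iperf3 numbers, the sketch below adds up the per-stream rates from the interval lines pasted earlier and converts the total to MB/s (10^6 bytes per second), a rough ceiling for a single-client read over this path. It is a hypothetical shell function that assumes iperf3's default human-readable interval format and should be fed the lines of one interval only.

```shell
#!/bin/sh
# Hypothetical helper: sum the Gbits/sec column of iperf3 stream lines on
# stdin and convert the total to MB/s (10^6 bytes/s).
# Only lines whose first field is "[" and whose last field is "Gbits/sec"
# are counted, so the "[SUM]" line is skipped.
# Every matching line is added, so pass the lines of ONE interval.
iperf_sum() {
  awk '$1 == "[" && $NF == "Gbits/sec" { total += $(NF - 1) }
       END { printf "%.2f Gbit/s = %.0f MB/s\n", total, total * 1000 / 8 }'
}

# The four 0.00-1.00 sec stream lines from the iperf3 run above
iperf_sum <<'EOF'
[ 5] 0.00-1.00 sec 299 MBytes 2.51 Gbits/sec
[ 8] 0.00-1.00 sec 299 MBytes 2.51 Gbits/sec
[ 10] 0.00-1.00 sec 292 MBytes 2.45 Gbits/sec
[ 12] 0.00-1.00 sec 292 MBytes 2.45 Gbits/sec
EOF
```

This prints `9.92 Gbit/s = 1240 MB/s` (iperf3's own `[SUM]` line says 9.91; the difference is rounding), roughly 2.6 times the 470MB/s seen from the share.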

As I mentioned before, the same Windows 11 client reads 1.15GB/s from the Ubuntu-based NAS, which runs Samba 4.15 on a single 10GbE RJ45 Marvell AQtion NIC.
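To put the two read speeds in proportion, this small sketch expresses each as a share of a nominal 10 Gbit/s link (1250 MB/s). It is a hypothetical helper that assumes the reported figures are decimal MB/s and ignores TCP and SMB overhead; if the figures are actually binary MiB/s, both percentages come out about 5% higher.

```shell
#!/bin/sh
# Hypothetical helper: express an observed speed in MB/s (10^6 bytes/s)
# as a percentage of a nominal link rate in Gbit/s, ignoring protocol overhead.
link_pct() {
  awk -v mb="$1" -v gbit="$2" 'BEGIN { printf "%.0f%%\n", mb / (gbit * 1000 / 8) * 100 }'
}

link_pct 470 10    # TrueNAS SCALE share
link_pct 1150 10   # Ubuntu NAS (1.15GB/s)
```

That works out to roughly 38% of the link for the TrueNAS share versus about 92% for the Ubuntu NAS.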

Tests are 100% reproducible.

I also tried TrueNAS SCALE 22 and even the 25.10 nightly, but the speed remains the same.

TrueNAS runs inside Proxmox while the Ubuntu NAS is bare metal, but I don’t think that affects anything.

fio and iperf3, run on the TrueNAS VM itself, report excellent speeds; the NVMe disks and the NIC are passed through to the VM over PCIe.
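The fio result can be put in the same units as the network. fio reports bandwidth in binary MiB/s (2^20 bytes), while link rates are decimal Gbit/s; the hypothetical helper below converts one to the other, assuming the `bw=4365MiB/s` READ figure from the fio run above as input.

```shell
#!/bin/sh
# Hypothetical helper: convert a fio bandwidth figure in MiB/s (2^20 bytes)
# into decimal Gbit/s (10^9 bits) for comparison with a NIC line rate.
mib_to_gbit() {
  awk -v mib="$1" 'BEGIN { printf "%.1f\n", mib * 1048576 * 8 / 1e9 }'
}

mib_to_gbit 4365   # READ bw=4365MiB/s from the fio run above
```

This prints `36.6`, i.e. the fio read rate is more than three times the 10 Gbit/s line rate.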

Am I missing something, or is Samba in TrueNAS somehow not built properly?

I’m thinking of compiling Samba from source myself, but I haven’t had time to figure out all the dev dependencies on TrueNAS.