In another thread, I mentioned upgrading my TrueNAS Scale 25.04.1 system to a newer motherboard and a faster processor (an i7-14700K). The motherboard has three NVMe slots, one of which holds the boot drive.

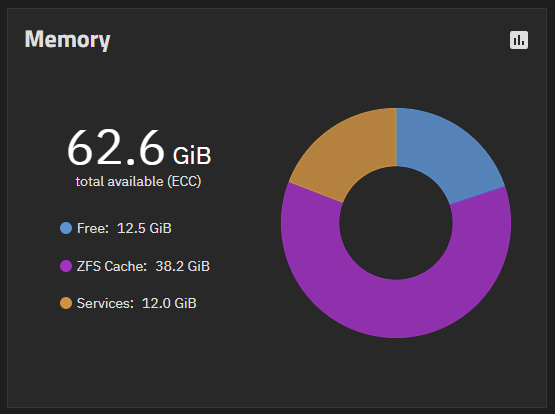

When I look at the current memory usage, I see the following:

The motherboard is capable of 192GB ECC memory across four slots, but unfortunately 96GB (48GB×2) ECC is extremely expensive compared to 64GB (32GB×2) ECC. I am planning on adding another 64GB by the end of the year.

My VDEV configuration is as follows:

One idea I’m toying with is putting a cheap 500GB drive in one of the NVMe slots and using it as an L2ARC cache, just to say that I’ve done it. One suggestion that I see in other threads is to look at the arc_summary report, which shows the following:

ARC status:

Total memory size: 62.6 GiB

Min target size: 3.1 % 2.0 GiB

Max target size: 98.4 % 61.6 GiB

Target size (adaptive): 62.0 % 38.2 GiB

Current size: 62.0 % 38.2 GiB

Free memory size: 9.6 GiB

Available memory size: 7.4 GiB

ARC structural breakdown (current size): 38.2 GiB

Compressed size: 88.6 % 33.8 GiB

Overhead size: 6.6 % 2.5 GiB

Bonus size: 0.8 % 323.6 MiB

Dnode size: 2.5 % 990.2 MiB

Dbuf size: 1.0 % 408.3 MiB

Header size: 0.3 % 135.9 MiB

L2 header size: 0.0 % 0 Bytes

ABD chunk waste size: 0.1 % 21.9 MiB

ARC types breakdown (compressed + overhead): 36.3 GiB

Data size: 93.9 % 34.1 GiB

Metadata size: 6.1 % 2.2 GiB

ARC states breakdown (compressed + overhead): 36.3 GiB

Anonymous data size: < 0.1 % 132.0 KiB

Anonymous metadata size: < 0.1 % 2.1 MiB

MFU data target: 12.2 % 4.5 GiB

MFU data size: 13.1 % 4.8 GiB

MFU evictable data size: 10.8 % 3.9 GiB

MFU ghost data size: 10.8 GiB

MFU metadata target: 10.2 % 3.7 GiB

MFU metadata size: 4.3 % 1.6 GiB

MFU evictable metadata size: 0.6 % 216.1 MiB

MFU ghost metadata size: 0 Bytes

MRU data target: 67.3 % 24.5 GiB

MRU data size: 80.8 % 29.4 GiB

MRU evictable data size: 78.6 % 28.6 GiB

MRU ghost data size: 3.5 GiB

MRU metadata target: 10.2 % 3.7 GiB

MRU metadata size: 1.8 % 655.4 MiB

MRU evictable metadata size: 0.5 % 173.3 MiB

MRU ghost metadata size: 0 Bytes

Uncached data size: 0.0 % 0 Bytes

Uncached metadata size: 0.0 % 0 Bytes

ARC hash breakdown:

Elements: 580.0k

Collisions: 1.7M

Chain max: 4

Chains: 19.1k

ARC misc:

Memory throttles: 0

Memory direct reclaims: 71199

Memory indirect reclaims: 1841239

Deleted: 13.0M

Mutex misses: 391

Eviction skips: 8.1k

Eviction skips due to L2 writes: 0

L2 cached evictions: 0 Bytes

L2 eligible evictions: 1.6 TiB

L2 eligible MFU evictions: 5.2 % 84.1 GiB

L2 eligible MRU evictions: 94.8 % 1.5 TiB

L2 ineligible evictions: 6.2 GiB

ARC total accesses: 581.9M

Total hits: 99.2 % 577.4M

Total I/O hits: < 0.1 % 123.4k

Total misses: 0.8 % 4.4M

ARC demand data accesses: 43.6 % 253.6M

Demand data hits: 99.9 % 253.3M

Demand data I/O hits: < 0.1 % 39.7k

Demand data misses: 0.1 % 243.3k

ARC demand metadata accesses: 55.6 % 323.3M

Demand metadata hits: 100.0 % 323.2M

Demand metadata I/O hits: < 0.1 % 17.0k

Demand metadata misses: < 0.1 % 104.0k

ARC prefetch data accesses: 0.8 % 4.9M

Prefetch data hits: 17.5 % 853.7k

Prefetch data I/O hits: 0.1 % 6.8k

Prefetch data misses: 82.4 % 4.0M

ARC prefetch metadata accesses: < 0.1 % 244.8k

Prefetch metadata hits: 42.0 % 102.8k

Prefetch metadata I/O hits: 24.5 % 59.9k

Prefetch metadata misses: 33.6 % 82.1k

ARC predictive prefetches: 99.7 % 5.1M

Demand hits after predictive: 93.2 % 4.8M

Demand I/O hits after predictive: 0.9 % 46.2k

Never demanded after predictive: 5.9 % 302.7k

ARC prescient prefetches: 0.3 % 15.2k

Demand hits after prescient: 97.6 % 14.8k

Demand I/O hits after prescient: 1.7 % 264

Never demanded after prescient: 0.7 % 102

ARC states hits of all accesses:

Most frequently used (MFU): 95.6 % 556.3M

Most recently used (MRU): 3.6 % 21.1M

Most frequently used (MFU) ghost: < 0.1 % 26.4k

Most recently used (MRU) ghost: < 0.1 % 100.7k

Uncached: 0.0 % 0

DMU predictive prefetcher calls: 124.8M

Stream hits: 33.0 % 41.2M

Hits ahead of stream: 7.8 % 9.7M

Hits behind stream: 29.9 % 37.4M

Stream misses: 29.3 % 36.6M

Streams limit reached: 46.6 % 17.1M

Stream strides: 809.6k

Prefetches issued 4.3M

If I’m reading this correctly, I probably won’t benefit from adding an NVMe drive as a Cache VDEV, since the ARC already serves 99.2 % of all accesses. But if I did go ahead, I would just install the drive and assign it to the pool as a Cache VDEV. Is that correct?
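To sanity-check my reading, here is a rough Python sketch of the arithmetic, using only figures copied from the report above. The variable names are my own, and the interpretation is my assumption: as I understand it, ghost hits are accesses to blocks that were recently evicted from the ARC, so they give a loose indication of what a bigger cache might have caught.

```python
# Figures copied by hand from the arc_summary output above
# (M = millions, k = thousands). Variable names are my own.
total_accesses = 581.9e6
total_hits = 577.4e6
mfu_ghost_hits = 26.4e3
mru_ghost_hits = 100.7e3


def pct(part, whole):
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


# Share of all accesses already served from the ARC.
hit_ratio = pct(total_hits, total_accesses)

# Share of all accesses that landed on recently evicted (ghost) entries.
ghost_ratio = pct(mfu_ghost_hits + mru_ghost_hits, total_accesses)

print(f"ARC hit ratio: {hit_ratio:.1f} %")
print(f"Ghost hits:    {ghost_ratio:.3f} % of all accesses")
```

With these numbers the hit ratio comes out at about 99.2 % and ghost hits at roughly 0.02 % of all accesses, which seems to match my guess that an L2ARC would have very little left to do for this workload.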