Kris and Chris are back from some travel to bring you another episode of TrueNAS Tech Talk! On today’s episode, RC1 will be launching next week, we’ll dig deeper into the containers vs VMs discussion from last week, and answer a couple of viewer questions we received this week on Incus data backup and how to change the compression algorithm on existing data.

Enjoyed the video, as always! Regarding your Home Assistant mention, there’s a lengthy comment below (not speaking “officially” in any way, though).

For some parts of Home Assistant and its addons to work correctly, it’s fairly crucial that Home Assistant has its own full OS, as the kernel configuration can be set exactly as needed and easily modified in a new OS release.

For example, (Matter over) Thread devices use (link local) IPv6 addresses and there’s a kernel patch in place for that (IPv6 reachability probing). The kernel parameters are also set carefully for Thread to work correctly (now).

I remember that some parameters for multicast forwarding and the IPv6 Neighbor Discovery Protocol needed tweaking for Thread Border Routers as well.

Like, there’s a lot to Home Assistant OS itself already. You might be able to do a lot with an LXC container, but probably not everything. And I’m not sure it’s possible to maintain another supported way of setting up Home Assistant in a clean and easy fashion, mostly just to avoid setting up a VM.

As mentioned later in the video, the performance overhead from one (or a couple) VMs also isn’t that high. And I imagine you’d just run one HA VM anyway.

Coming back to the Thread example, you might not need a lot of these patches/tweaks in Home Assistant OS now, but maybe you want to run a Matter over Thread device with multiple third-party Thread Border Routers in your network at some point (e.g. you just have multiple Apple TVs).

Then, update/reboot an Apple TV and realize that Home Assistant can no longer communicate with your Thread device due to the routes not failing over because of the missing IPv6 reachability patch. Both as an end-user and developer, it might be hard to even figure out that the issue is with the underlying OS in that case. These issues have happened and were fixed in HA OS.

Also, the Home Assistant addons (called “plugins” in the video) are mostly just Docker containers. You might be able to set them up as individual Docker containers on another OS yourself, but I really wouldn’t recommend this and there have been issues with users setting up HA Core and “addons” as Docker containers themselves (e.g. with multicast, again).

There are four officially supported installation types of Home Assistant at the moment:

- Home Assistant OS (recommended, generally works well with good UX)

- Home Assistant Container (runs HA Core in a Docker container, also used inside of HA OS)

- Home Assistant Supervised (similar to HA OS, but with your own OS)

- Home Assistant Core (installing Core directly in a Python virtual environment)

Dropping support for HA Supervised (3.) and running HA Core directly in a venv (4.) is currently being discussed in the HA architecture repo due to multiple reasons, including that few users run these installation types (see analytics.home-assistant.io*) and because they cause a lot of unnecessary maintenance and support overhead.

They just cause a good number of issues that would never happen on HA OS, and handling those takes up quite a bit of the maintainers’ free time.

Now, introducing a mix of HA OS and Supervised (1. and 3.) seems like it might just cause more trouble than it’s worth.

I’d guess the majority of HA users don’t really care that they have to set up one VM, instead of an LXC container (or might not even know what an LXC container is).

Maybe there’ll be a community supported LXC container with HA OS like features soon, but speaking for myself, I just don’t see the possibility of an officially supported LXC container (with the full “Supervised/Docker management addons” part) anytime soon.

For everything HA related to mostly “just work”, HA OS is really the way to go, both from a user and maintainer perspective.

Home Assistant might also be one of the few applications that you’d want a separate system for, e.g. if you rely on it for your house lights and switches (to not have downtime during TrueNAS/Proxmox/… updates, server hardware upgrades, or when you have to migrate VMs in Fangtooth). On that note, I’ve been very happy with Fangtooth so far!

If HA fails to start after OS updates for some reason, HA OS should automatically switch back to the other boot slot with the older OS version, so it should be fine to run headless on a physical machine (without an attached monitor/KVM).

I’m running HA OS in a TrueNAS hosted VM myself, but you can still connect a separate HA OS machine to your TrueNAS system for HA backups via SMB.

You can also do that with an HA OS VM in TrueNAS if you don’t want to rely on ZFS snapshots or just want to easily roll back parts of Home Assistant (HA Core or specific addons) directly from the Home Assistant UI.

And for just taking a backup every week, the SMB and network overhead from the VM is not that critical.

*: A note on HA analytics: only look at the percentage of users for each installation type, and more or less ignore the absolute numbers. Because HA analytics is entirely opt-in, the majority of people have it disabled (actual numbers are probably at least three times higher).

Very informative Tech Talk. You really pointed out key differences between Docker, LXC, and KVM, very valuable.

Great video as always guys. My question is around compression. I get the feeling that in many aspects ZSTD is superior to LZ4 (correct me if I’m wrong) but if so why does LZ4 still remain the default in TrueNAS and could we expect this to change in future versions?

LZ4 is insanely fast, while able to save some space on “compressible” files. It will even compress the extraneous null bytes on the last record of a file, which is especially important for datasets with a large recordsize.

Ever since OpenZFS 2.2.x, I use ZSTD-9 for everything, except for multimedia datasets and other exceptions. For those, I stick with LZ4.

I had considered switching to ZSTD-7 as a sweet spot for speed and savings, but I haven’t noticed any performance issues for my casual use. The ZSTD “early abort” feature introduced in OpenZFS 2.2.x is one of my favorite improvements of ZFS.

EDIT:

TL;DR: LZ4 makes sense as the default, no matter how much improvement we’ve seen with ZSTD and ZSTD “early abort”.

Large record size 1M+?

Yes.

With ZFS, no matter what the recordsize is set to, if a file’s size does not exceed it, then it will be saved as a special “single block” that is sized to the nearest binary jump (i.e., 32K → 64K → 128K → 256K → 512K, etc.).

This means that even with a recordsize set to “1M”, a file that is 200K in size will be saved as a 256K block, not a 1M block. (This doesn’t even factor compression, which will further shrink the block on the storage drive.)

If the file cannot be saved as a single block that is less than or equal to the 1M recordsize? Then every block that comprises the file will be 1M, including the last block, which could be very wasteful without any compression. The last block might only hold a few KB of actual file data, yet wastes 996KB of padding with “null bytes”. However, this is where using any compression will remove the wasted space at the end of a file’s last block.

That’s why I always recommend using LZ4 (or ZLE) at minimum, even for multimedia datasets.
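To put a number on the last-block padding described above, here is a rough Python sketch. It only models files that span more than one record and assumes every block of such a file is a full `recordsize` before compression; the function name `tail_padding` and the example file size are made up for illustration, and single-block files are deliberately not modeled.

```python
KIB = 1024
MIB = 1024 * KIB


def tail_padding(file_size: int, recordsize: int = MIB) -> int:
    """Return the bytes of zero padding in the last block of a multi-block
    file, before any compression is applied (hypothetical helper)."""
    if file_size <= recordsize:
        # Files that fit in a single block are sized differently,
        # so this sketch does not model them.
        return 0
    remainder = file_size % recordsize
    # A file that ends exactly on a record boundary has no padding.
    return 0 if remainder == 0 else recordsize - remainder


# Illustrative file: five full 1M records plus 28K of data in the last one.
size = 5 * MIB + 28 * KIB
print(tail_padding(size) // KIB, "KiB of padding in the last block")
```

With these illustrative numbers, the last record holds 28K of data and the rest of it is zeros, which is exactly the kind of run that LZ4 or ZLE removes.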

@kris and @HoneyBadger, you laid out the differences and benefits of LXC (“Linux containers”) vs Docker quite well.

I want to add one more, which is often overlooked, since it appeals more to “power users”.

A full OS “jail” gives you access to more software, which might not be popular enough to offer a dedicated drop-in (via Docker), as well as giving you an environment to compile software that’s not even available in a distro’s package manager. It also allows you to grab (or patch) software from a forked GitHub repository.

To give an example: Without a “jail”, I wouldn’t be able to use the Wayback Machine Downloader with much success.

- The one on hub.docker.com is 7 years old, and no longer works properly.

- The upstream code suffers a crippling bug, and has since been abandoned.

- A package is not available on most distros, and even if it is, it’s using the severely outdated code.

- The same issue exists for the ArchLinux AUR.

Thankfully, someone forked the code, updated it, and patched it. In a “jail”, I can use this working version, since I have access to a legit OS and filesystem.

Having to rely strictly on Docker, I wouldn’t be able to use the software.

Someone might say, “Just use a VM”. To me, that’s too much overhead for a small command-line utility… No thanks. I’ll stick with jails and then later, LXC containers.

Well, not strictly true. You would have to create a Dockerfile or two.

And in this case, setting up a jail/lxc is easier than learning how to create a multi-stage docker build.

The Dockerfile basically codifies the same steps you would use to install the software in a jail… but in a Docker base image.

A multi-stage build could be used to build the software so that only the binary is included in the final Docker image.

So, similar to jail setup scripts, but an industry standard, and with optimized layering.

At that point, like you said, I’d already have made a jail and installed the software by then.

Which reminds me of another benefit of jails (and LXC?): One jail can house multiple pieces of software with a “similar purpose” that all use the same (non-abstracted) mountpoints, with the same dedicated IP address.

In my example with Wayback Machine Downloader, it’s not the only thing installed in this jail.

I have a collection of tools in my general purpose jail, which I give access to relevant datasets:

- yt-dlp, for downloading and archiving videos and metadata from different platforms

- gallery-dl, for downloading and archiving galleries and metadata from different platforms

- wget, as a universal downloader

- httrack, as a webpage and website archiver (I might replace it with something more modern)

- wayback_machine_downloader, for saving local copies of archives and snapshots from Archive.org

- aria2, as a general purpose downloader, which also supports torrents, without the need to use my qBittorrent jail

- ffmpeg, universal encoder for converting multimedia in bulk, unattended

- a bunch of other tools, for metadata and file management

Either SSH into the jail’s IP or enter it from the TrueNAS host, and off I go.

Yeah. It’s as if it is a lightweight virtual machine.

Good point @winnielinnie, I could have probably expounded on that aspect of LXC a bit more. But to Stux’s point, learning how to write a Dockerfile is sometimes also a valid alternative. I’ve done that for a couple of things now, and found it to be a very clean way to re-package something into Docker format using more or less the same (reproducible) build process I would have used with an LXC instance.

I think I’ve ranted on this before, but moving to Linux meant we went from slim options for “how to do things” to now almost too many to pick from at times. I’ve learned to embrace the diversity, since it means we just have more choices to pick a tool that best fits the specifics of the use-case. LXC and hand-rolled Docker both have places where they fit best.

… especially if the docker compose files for Apps still point to TrueCharts repositories… (cough, cough). See Home Assistant, Frigate, among others in 24.10.

Where are you seeing that? I did a quick check of Frigate and home-assistant apps here, I don’t see any truecharts linkage anywhere in the files or repo at large.

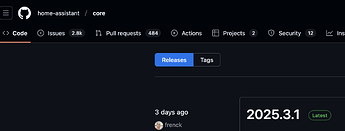

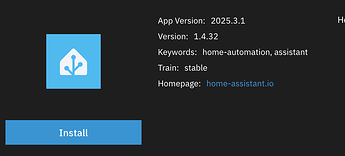

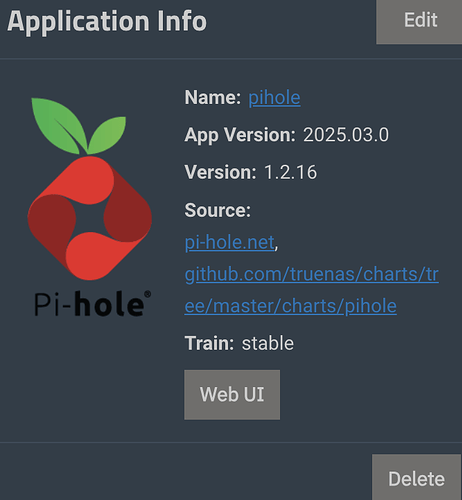

Apologies, but as of yesterday, the Apps still pointed to /charts/ repositories for Home Assistant, pi-hole, and Frigate with versions that didn’t reflect active versions (e.g. 2025-03 vs. v6.05 for pi-hole). IIRC, @HoneyBadger told me that these repositories had to be updated?

Perhaps that happened for Fangtooth but not 24.10.2? Or maybe I need to update my apps?

OK, that is the confusion. We listed truenas/charts links in the sources section (as in, they were originally ported from our K8s apps). But that has zero to do with the TrueCharts repos.

Those sources were just a historical reference marker and had zero functionality. We’re removing those now since we don’t want to confuse folks who see the word “charts”.

FWIW, I got confused because the versions listed for the underlying projects had nothing to do with the actual current versions. It would be great if the version being shown reflects the actual version of the project, not an arbitrary date stamp.

I want to take another run at implementing these Apps once I have made the transition to Fangtooth because I much prefer having the ability to assign IP addresses to each App vs. using a proxy or whatever.

But first I have to finish an interrupted replication to a remote NAS that will take the whole week to complete.

FWIW, I have had little success with the resume feature that ZFS send allegedly has as of 24.04. Instead of resuming where a stream was cut off, the whole replication that was interrupted has to be redone (e.g. all of time-machine@auto2025-03-08 has to be retransmitted, even if 99% of that snapshot had previously been sent to the remote machine).

I’m not sure what you mean by “arbitrary date stamp”.

For example, HA releases use a date stamp, which is what we also show as the App Version.

Can you share examples where the App Version is not following the upstream versions?

Note that for your installed apps it will display the version you have currently installed. Once you update them it will display the updated version…

I’d have to be home with my NAS to confirm but IIRC, neither version listed for pihole was 6.0x or in the 5.x range. Hence my confusion.

I also misunderstood @HoneyBadger when he mentioned “cruft” re versions being shown for pi-hole.

Ahh, OK, I see. The Pi-hole Docker edition has a different version scheme compared to Pi-hole (the main software).

No wonder it is confusing.
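As a rough illustration of the two schemes, here is a small Python sketch that tells a date-stamp style version apart from a conventional numbered one. The helper name `looks_like_date_stamp` and the sample strings are assumptions chosen to resemble the versions mentioned in this thread, not values read from any App catalog.

```python
import re

# Four-digit year, then a month, then optional further components,
# separated by "." or "-". An optional leading "v" is tolerated.
_DATE_STAMP = re.compile(r"v?(\d{4})[.-](\d{1,2})(?:[.-]\d+)*")


def looks_like_date_stamp(version: str) -> bool:
    """Heuristic check (hypothetical helper): True if the version string
    looks like YEAR.MONTH[.PATCH] rather than MAJOR.MINOR.PATCH."""
    m = _DATE_STAMP.fullmatch(version)
    return m is not None and 1 <= int(m.group(2)) <= 12


# Sample strings are illustrative only.
for v in ["2025.03.0", "2025-03", "v6.0.5", "5.18.3"]:
    kind = "date stamp" if looks_like_date_stamp(v) else "numbered release"
    print(f"{v}: {kind}")
```

Both styles can be perfectly current at the same time; they just count different things, which is why comparing one against the other looks like a mismatch.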