Good day,

For about 3 years now, I’ve had my own server at home, and everything runs pretty flawlessly.

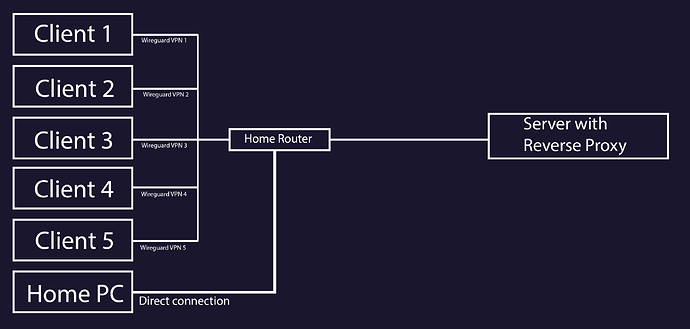

I mainly use the server as network storage that I can reach from my phone and laptop on the go, as well as from my PC at home. So far, I’ve been using WireGuard to connect to the server.

Besides that, I use the server with Jellyfin for private music streaming, including during our DnD sessions.

My fellow players can access the music library via their own WireGuard VPN connections, and that works quite well too.

So much for the explanation of how the server is mainly used.

First off, I should say that I enjoy trying new things and love gaining practical experience. Sometimes I don’t care whether I gain an advantage from it or not.

For this exact reason, I started researching how to turn the usual http:// address into an https:// address. Why? Pure curiosity, that’s all.

I’ll briefly summarize my research journey.

To use an HTTPS address, I need an SSL/TLS certificate. I already knew that much, but how to obtain one was completely new to me.

After a lot of research, I found out that I can get the SSL certificate from various providers, such as Cloudflare. Additionally, I need my own domain.

If I understand correctly, this would mean my server is then reachable over the web without using a VPN connection.

However, it seems I would then be dependent on Cloudflare to reach the server from outside my home network, is that correct?

For your information: I am aware of Tailscale’s existence. However, I gave myself the challenge of being as independent as possible from other providers (at least from the perspective of a single private individual), and I have no problem investing more work into something and keeping it up to date.

The thought of losing access to the server because another provider, e.g., Tailscale, is having technical problems does not appeal to me.

To put my question very briefly: Does the server have to be reachable from the internet to have an SSL certificate, and if so, can I still access the server via a VPN connection if the certificate provider has an outage?

Thank you so much in advance for your answers!

Gévarred