Hi everybody,

This is a follow-up to the thread "System extremely slow after stopping a large copy between datasets", which was mostly about tracking down the error. In this topic I am looking for an explanation of my iostat numbers.

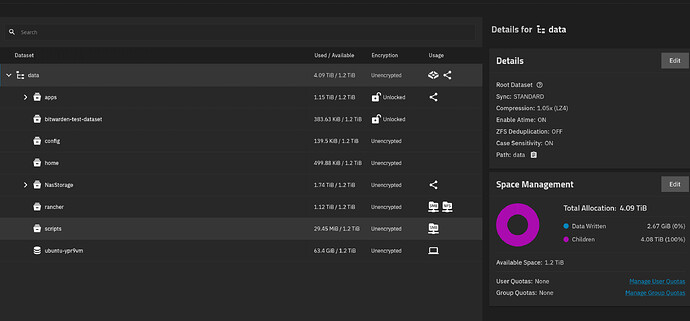

I was running a rather large data transfer (1.2 GB) within a single RAIDZ1 pool consisting of 4x2TB SSDs (Teamgroup T-FORCE, i.e. consumer SSDs with TLC cells and an SLC cache). The transfer became slower and slower over time until it moved almost no data at all.

At the same time the system and especially the GUI became almost unusable.

After a while I discovered that this was probably because one disk (sdb) showed very high latencies (the data pool and sda-sdd are the relevant entries here):

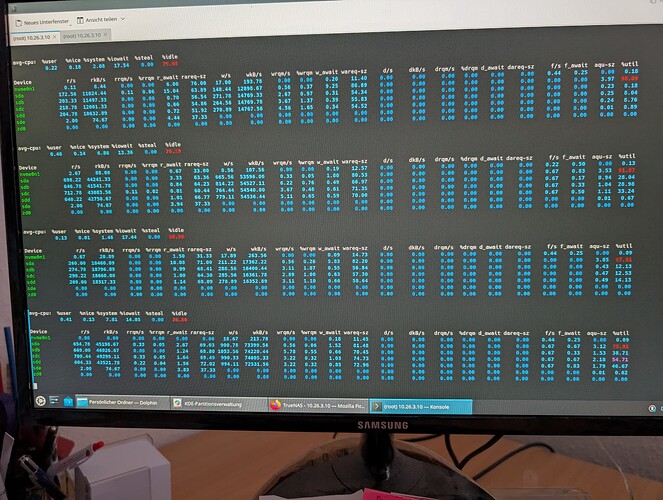

iostat -sxy 10

Linux 6.12.33-production+truenas (truenas) 01/23/26 _x86_64_ (12 CPU)

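avg-cpu:

| %user | %nice | %system | %iowait | %steal | %idle |
| --- | --- | --- | --- | --- | --- |
| 0.19 | 0.00 | 1.38 | 20.20 | 0.00 | 78.23 |

| Device | tps | kB/s | rqm/s | await | areq-sz | aqu-sz | %util |
| --- | --- | --- | --- | --- | --- | --- | --- |
| nvme0n1 | 17.60 | 199.60 | 0.00 | 0.19 | 11.34 | 0.00 | 0.16 |
| sda | 142.40 | 7732.00 | 2.30 | 0.41 | 54.30 | 0.06 | 2.44 |
| sdb | 93.70 | 6710.00 | 2.70 | 37.09 | 71.61 | 3.49 | 95.24 |
| sdc | 167.70 | 9678.80 | 1.90 | 0.78 | 57.71 | 0.13 | 4.12 |
| sdd | 152.40 | 9476.40 | 1.80 | 0.56 | 62.18 | 0.08 | 3.20 |
| sde | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 |
| sdf | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 |
| zd0 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 | 0.00 |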
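To put a number on how far sdb stands out, here is a minimal Python sketch. It assumes the four pool members' rows from the iostat output above are typed in by hand, and the names `samples`, `find_outliers` and `estimated_queue` as well as the 10x threshold are my own arbitrary choices, not anything iostat or TrueNAS provides. It also checks the reported aqu-sz against Little's law (queue length is roughly tps times await), as a sanity check that the columns are consistent with each other.

```python
from statistics import median

# Hand-copied from the iostat output: device -> (tps, await in ms, aqu-sz, %util)
samples = {
    "sda": (142.40, 0.41, 0.06, 2.44),
    "sdb": (93.70, 37.09, 3.49, 95.24),
    "sdc": (167.70, 0.78, 0.13, 4.12),
    "sdd": (152.40, 0.56, 0.08, 3.20),
}


def find_outliers(rows, factor=10.0):
    """Flag devices whose await is more than `factor` times the median
    await of the other devices. The factor is an arbitrary assumption."""
    flagged = []
    for dev, values in rows.items():
        peers = [v[1] for d, v in rows.items() if d != dev]
        if values[1] > factor * median(peers):
            flagged.append(dev)
    return flagged


def estimated_queue(tps, await_ms):
    """Little's law: average requests in flight = request rate * time per request."""
    return tps * await_ms / 1000.0


if __name__ == "__main__":
    print("outliers:", find_outliers(samples))
    for dev, (tps, await_ms, aqu_sz, util) in samples.items():
        est = estimated_queue(tps, await_ms)
        print(f"{dev}: reported aqu-sz={aqu_sz:.2f}, tps*await={est:.2f}, util={util:.1f}%")
```

With these numbers only sdb is flagged, and its tps times await comes out close to the reported aqu-sz of 3.49, so the queue on that one disk is consistent with its latency rather than being a reporting glitch.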

zpool iostat -yl 9 1 truenas: Fri Jan 23 15:05:32 2026

| pool | capacity alloc | capacity free | operations read | operations write | bandwidth read | bandwidth write | total_wait read | total_wait write | disk_wait read | disk_wait write | syncq_wait read | syncq_wait write | asyncq_wait read | asyncq_wait write | scrub wait | trim wait | rebuild wait |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| boot-pool | 21.4G | 195G | 0 | 17 | 0 | 292K | - | 869us | - | 289us | - | 832ns | - | 641us | - | - | - |
| data | 4.90T | 2.53T | 22 | 5 | 1.13M | 387K | 707ms | 25s | 124ms | 2s | - | - | 600ms | 22s | - | - | - |
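As I understand the zpool iostat columns, total_wait is queueing time plus disk time, so the data pool's numbers can be split into "waiting in the ZFS queue" versus "waiting on the disk". The sketch below is only a rough illustration under that assumption. The helper names `to_seconds` and `queue_share` are made up for this post, and the printed values are rounded by zpool, so the parts do not add up exactly.

```python
import re

# Multipliers to seconds for the unit suffixes seen in the output above
_UNITS = {"ns": 1e-9, "us": 1e-6, "ms": 1e-3, "s": 1.0}


def to_seconds(text):
    """Convert a value such as '707ms' or '25s' to seconds."""
    match = re.fullmatch(r"([0-9.]+)(ns|us|ms|s)", text)
    if match is None:
        raise ValueError(f"unrecognised duration: {text!r}")
    return float(match.group(1)) * _UNITS[match.group(2)]


def queue_share(total_wait, queue_wait):
    """Fraction of the total wait that was spent queued rather than on disk."""
    return to_seconds(queue_wait) / to_seconds(total_wait)


if __name__ == "__main__":
    # Values for the data pool, copied by hand from the zpool iostat output
    print(f"read:  {queue_share('707ms', '600ms'):.0%} of total_wait is asyncq_wait")
    print(f"write: {queue_share('25s', '22s'):.0%} of total_wait is asyncq_wait")
```

For both reads and writes, well over four fifths of the total wait is time spent in the async queue, which would fit the picture of requests piling up behind a single slow disk.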

I expected this drive to have a hardware issue, and that seemed to be confirmed when it failed completely at some point during the transfer with this error:

Pool data state is ONLINE: One or more devices are faulted in response to persistent errors. Sufficient replicas exist for the pool to continue functioning in a degraded state.

The following devices are not healthy:

- Disk T-FORCE_2TB TPBF2301030030100448 is FAULTED

So I replaced the SSD and all SATA cables, and eventually I was able to complete the transfer at reasonable speeds (I think it was around 20Mbit/s in the end).

However, even with the new drive, I noticed that the system was now showing high utilization for a different drive:

(Sorry I wasn’t able to copy the output).

To me, this looked like a bottleneck holding back a transfer that could otherwise be much faster.

I saw this highly imbalanced distribution of utilization and wait times throughout the whole process, but now it was a different drive (not the new one) that looked faulty, one that had not stood out before.

This time the drive wasn't disconnected by TrueNAS, but I wonder whether those outliers are something I should take seriously?