Thanks for your reply @Arwen,

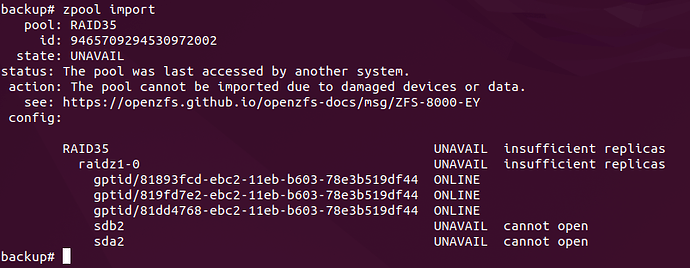

Both sda2 and sdb2 are connected and respond to hardware checks, but the pool is missing them. I ran the commands as you suggested:

backup# zpool import

pool: RAID35

id: 9465709294530972002

state: UNAVAIL

status: The pool was last accessed by another system.

action: The pool cannot be imported due to damaged devices or data.

see: https://openzfs.github.io/openzfs-docs/msg/ZFS-8000-EY

config:

RAID35 UNAVAIL insufficient replicas

raidz1-0 UNAVAIL insufficient replicas

gptid/81893fcd-ebc2-11eb-b603-78e3b519df44 ONLINE

gptid/819fd7e2-ebc2-11eb-b603-78e3b519df44 ONLINE

gptid/81dd4768-ebc2-11eb-b603-78e3b519df44 ONLINE

sdb2 UNAVAIL cannot open

sda2

backup# zpool import -f -R /mnt RAID35

cannot import 'RAID35': no such pool or dataset

Destroy and re-create the pool from

a backup source.

backup# gpart show

=> 40 976773088 ada0 GPT (466G)

40 532480 1 efi (260M)

532520 33554432 3 freebsd-swap (16G)

34086952 942669824 2 freebsd-zfs (450G)

976756776 16352 - free - (8.0M)

=> 40 7814037088 ada1 GPT (3.6T)

40 88 - free - (44K)

128 4194304 1 freebsd-swap (2.0G)

4194432 7809842696 2 freebsd-zfs (3.6T)

=> 40 7814037088 ada2 GPT (3.6T)

40 88 - free - (44K)

128 4194304 1 freebsd-swap (2.0G)

4194432 7809842696 2 freebsd-zfs (3.6T)

=> 40 7814037088 ada3 GPT (3.6T)

40 88 - free - (44K)

128 4194304 1 freebsd-swap (2.0G)

4194432 7809842696 2 freebsd-zfs (3.6T)

=> 40 7814037088 ada4 GPT (3.6T)

40 88 - free - (44K)

128 4194304 1 freebsd-swap (2.0G)

4194432 7809842696 2 freebsd-zfs (3.6T)

=> 40 7814037088 ada5 GPT (3.6T)

40 88 - free - (44K)

128 4194304 1 freebsd-swap (2.0G)

4194432 7809842696 2 freebsd-zfs (3.6T)

backup# gpart status

Name Status Components

ada0p1 OK ada0

ada0p2 OK ada0

ada0p3 OK ada0

ada1p1 OK ada1

ada1p2 OK ada1

ada2p1 OK ada2

ada2p2 OK ada2

ada3p1 OK ada3

ada3p2 OK ada3

ada4p1 OK ada4

ada4p2 OK ada4

ada5p1 OK ada5

ada5p2 OK ada5

backup# gpart list

Geom name: ada0

modified: false

state: OK

fwheads: 16

fwsectors: 63

last: 976773127

first: 40

entries: 128

scheme: GPT

Providers:

1. Name: ada0p1

Mediasize: 272629760 (260M)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(1,GPT,9ed682a9-63b1-11ef-8c49-1c1b0d4b30b5,0x28,0x82000)

rawuuid: 9ed682a9-63b1-11ef-8c49-1c1b0d4b30b5

rawtype: c12a7328-f81f-11d2-ba4b-00a0c93ec93b

label: (null)

length: 272629760

offset: 20480

type: efi

index: 1

end: 532519

start: 40

2. Name: ada0p2

Mediasize: 482646949888 (450G)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r1w1e1

efimedia: HD(2,GPT,9f1e1df4-63b1-11ef-8c49-1c1b0d4b30b5,0x2082028,0x38300000)

rawuuid: 9f1e1df4-63b1-11ef-8c49-1c1b0d4b30b5

rawtype: 516e7cba-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 482646949888

offset: 17452519424

type: freebsd-zfs

index: 2

end: 976756775

start: 34086952

3. Name: ada0p3

Mediasize: 17179869184 (16G)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r1w1e1

efimedia: HD(3,GPT,9efbed7a-63b1-11ef-8c49-1c1b0d4b30b5,0x82028,0x2000000)

rawuuid: 9efbed7a-63b1-11ef-8c49-1c1b0d4b30b5

rawtype: 516e7cb5-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 17179869184

offset: 272650240

type: freebsd-swap

index: 3

end: 34086951

start: 532520

Consumers:

1. Name: ada0

Mediasize: 500107862016 (466G)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r2w2e4

Geom name: ada1

modified: false

state: OK

fwheads: 16

fwsectors: 63

last: 7814037127

first: 40

entries: 128

scheme: GPT

Providers:

1. Name: ada1p1

Mediasize: 2147483648 (2.0G)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(1,GPT,8114ac1d-ebc2-11eb-b603-78e3b519df44,0x80,0x400000)

rawuuid: 8114ac1d-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cb5-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 2147483648

offset: 65536

type: freebsd-swap

index: 1

end: 4194431

start: 128

2. Name: ada1p2

Mediasize: 3998639460352 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(2,GPT,81893fcd-ebc2-11eb-b603-78e3b519df44,0x400080,0x1d180be08)

rawuuid: 81893fcd-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cba-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 3998639460352

offset: 2147549184

type: freebsd-zfs

index: 2

end: 7814037127

start: 4194432

Consumers:

1. Name: ada1

Mediasize: 4000787030016 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

Geom name: ada2

modified: false

state: OK

fwheads: 16

fwsectors: 63

last: 7814037127

first: 40

entries: 128

scheme: GPT

Providers:

1. Name: ada2p1

Mediasize: 2147483648 (2.0G)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(1,GPT,811f648b-ebc2-11eb-b603-78e3b519df44,0x80,0x400000)

rawuuid: 811f648b-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cb5-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 2147483648

offset: 65536

type: freebsd-swap

index: 1

end: 4194431

start: 128

2. Name: ada2p2

Mediasize: 3998639460352 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(2,GPT,819fd7e2-ebc2-11eb-b603-78e3b519df44,0x400080,0x1d180be08)

rawuuid: 819fd7e2-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cba-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 3998639460352

offset: 2147549184

type: freebsd-zfs

index: 2

end: 7814037127

start: 4194432

Consumers:

1. Name: ada2

Mediasize: 4000787030016 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

Geom name: ada3

modified: false

state: OK

fwheads: 16

fwsectors: 63

last: 7814037127

first: 40

entries: 128

scheme: GPT

Providers:

1. Name: ada3p1

Mediasize: 2147483648 (2.0G)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(1,GPT,81b84b67-ebc2-11eb-b603-78e3b519df44,0x80,0x400000)

rawuuid: 81b84b67-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cb5-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 2147483648

offset: 65536

type: freebsd-swap

index: 1

end: 4194431

start: 128

2. Name: ada3p2

Mediasize: 3998639460352 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(2,GPT,81dd4768-ebc2-11eb-b603-78e3b519df44,0x400080,0x1d180be08)

rawuuid: 81dd4768-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cba-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 3998639460352

offset: 2147549184

type: freebsd-zfs

index: 2

end: 7814037127

start: 4194432

Consumers:

1. Name: ada3

Mediasize: 4000787030016 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

Geom name: ada4

modified: false

state: OK

fwheads: 16

fwsectors: 63

last: 7814037127

first: 40

entries: 128

scheme: GPT

Providers:

1. Name: ada4p1

Mediasize: 2147483648 (2.0G)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(1,GPT,82a0763b-ebc2-11eb-b603-78e3b519df44,0x80,0x400000)

rawuuid: 82a0763b-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cb5-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 2147483648

offset: 65536

type: freebsd-swap

index: 1

end: 4194431

start: 128

2. Name: ada4p2

Mediasize: 3998639460352 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(2,GPT,82b868b6-ebc2-11eb-b603-78e3b519df44,0x400080,0x1d180be08)

rawuuid: 82b868b6-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cba-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 3998639460352

offset: 2147549184

type: freebsd-zfs

index: 2

end: 7814037127

start: 4194432

Consumers:

1. Name: ada4

Mediasize: 4000787030016 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

Geom name: ada5

modified: false

state: OK

fwheads: 16

fwsectors: 63

last: 7814037127

first: 40

entries: 128

scheme: GPT

Providers:

1. Name: ada5p1

Mediasize: 2147483648 (2.0G)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(1,GPT,82968a9d-ebc2-11eb-b603-78e3b519df44,0x80,0x400000)

rawuuid: 82968a9d-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cb5-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 2147483648

offset: 65536

type: freebsd-swap

index: 1

end: 4194431

start: 128

2. Name: ada5p2

Mediasize: 3998639460352 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

efimedia: HD(2,GPT,82afd059-ebc2-11eb-b603-78e3b519df44,0x400080,0x1d180be08)

rawuuid: 82afd059-ebc2-11eb-b603-78e3b519df44

rawtype: 516e7cba-6ecf-11d6-8ff8-00022d09712b

label: (null)

length: 3998639460352

offset: 2147549184

type: freebsd-zfs

index: 2

end: 7814037127

start: 4194432

Consumers:

1. Name: ada5

Mediasize: 4000787030016 (3.6T)

Sectorsize: 512

Stripesize: 4096

Stripeoffset: 0

Mode: r0w0e0

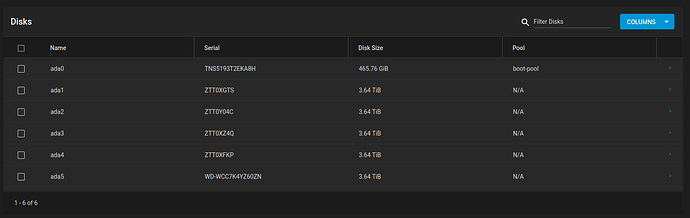

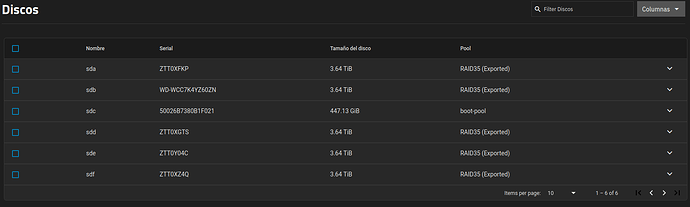

I ran smartctl tests on both disks and they reported no errors. I’m wondering why the zpool import command shows sda2 and sdb2 for the missing disks instead of their gptids. After the upgrade to SCALE, I noticed that all disks had been renamed to Linux device names (sda, sdb, sdc, etc.). I then reinstalled CORE 13 and imported the backup config, but the missing disks still show the Linux device names, while the attached ones use their gptids.

Any ideas? Would you recommend upgrading to SCALE again to handle the situation, or is it better to first try to fix the missing disks from CORE? Does SCALE accept a backup config from CORE?

After comparing the gptids from the previous commands with the disk list, I think the pool is missing ada4p2 and ada5p2:

gptid/82b868b6-ebc2-11eb-b603-78e3b519df44 N/A ada4p2

gptid/82afd059-ebc2-11eb-b603-78e3b519df44 N/A ada5p2

but the zpool import command shows them as sda2 and sdb2.
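To show how I did that comparison, here is a rough Python sketch. The UUIDs are copied by hand from the `gpart list` and `zpool import` output above; the function name `unmatched_partitions` is just something I made up for illustration, and I left out ada0p2 on the assumption that it belongs to the boot pool and not to RAID35.

```python
# rawuuid -> partition name for the freebsd-zfs partitions on the five 3.6T disks,
# copied by hand from the `gpart list` output (ada0p2 is deliberately left out).
ZFS_PARTITIONS = {
    "81893fcd-ebc2-11eb-b603-78e3b519df44": "ada1p2",
    "819fd7e2-ebc2-11eb-b603-78e3b519df44": "ada2p2",
    "81dd4768-ebc2-11eb-b603-78e3b519df44": "ada3p2",
    "82b868b6-ebc2-11eb-b603-78e3b519df44": "ada4p2",
    "82afd059-ebc2-11eb-b603-78e3b519df44": "ada5p2",
}

# Members that `zpool import` reports as ONLINE in raidz1-0.
ONLINE_VDEVS = [
    "gptid/81893fcd-ebc2-11eb-b603-78e3b519df44",
    "gptid/819fd7e2-ebc2-11eb-b603-78e3b519df44",
    "gptid/81dd4768-ebc2-11eb-b603-78e3b519df44",
]


def unmatched_partitions(partitions, online_vdevs):
    """Return the partition names whose rawuuid is not among the ONLINE gptids."""
    # Strip the "gptid/" prefix so the values compare directly with rawuuid.
    online = {vdev.split("/", 1)[-1] for vdev in online_vdevs}
    return sorted(name for uuid, name in partitions.items() if uuid not in online)


if __name__ == "__main__":
    print(unmatched_partitions(ZFS_PARTITIONS, ONLINE_VDEVS))
```

With the values above this prints `['ada4p2', 'ada5p2']`, which is how I arrived at those two partitions. It only shows which partitions are not referenced by gptid; it does not prove they are the ones listed as sda2 and sdb2.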

Both ada4 and ada5 pass the smartctl tests:

backup# smartctl -a /dev/ada4

smartctl 7.2 2021-09-14 r5236 [FreeBSD 13.1-RELEASE-p9 amd64] (local build)

Copyright (C) 2002-20, Bruce Allen, Christian Franke, www.smartmontools.org

=== START OF INFORMATION SECTION ===

Model Family: Seagate BarraCuda 3.5 (SMR)

Device Model: ST4000DM004-2CV104

Serial Number: ZTT0XFKP

LU WWN Device Id: 5 000c50 0c905afe7

Firmware Version: 0001

User Capacity: 4,000,787,030,016 bytes [4.00 TB]

Sector Sizes: 512 bytes logical, 4096 bytes physical

Rotation Rate: 5425 rpm

Form Factor: 3.5 inches

Device is: In smartctl database [for details use: -P show]

ATA Version is: ACS-3 T13/2161-D revision 5

SATA Version is: SATA 3.1, 6.0 Gb/s (current: 6.0 Gb/s)

Local Time is: Thu Aug 29 09:49:44 2024 CEST

SMART support is: Available - device has SMART capability.

SMART support is: Enabled

=== START OF READ SMART DATA SECTION ===

SMART overall-health self-assessment test result: PASSED

General SMART Values:

Offline data collection status: (0x00) Offline data collection activity

was never started.

Auto Offline Data Collection: Disabled.

Self-test execution status: ( 0) The previous self-test routine completed

without error or no self-test has ever

been run.

Total time to complete Offline

data collection: ( 0) seconds.

Offline data collection

capabilities: (0x73) SMART execute Offline immediate.

Auto Offline data collection on/off support.

Suspend Offline collection upon new

command.

No Offline surface scan supported.

Self-test supported.

Conveyance Self-test supported.

Selective Self-test supported.

SMART capabilities: (0x0003) Saves SMART data before entering

power-saving mode.

Supports SMART auto save timer.

Error logging capability: (0x01) Error logging supported.

General Purpose Logging supported.

Short self-test routine

recommended polling time: ( 1) minutes.

Extended self-test routine

recommended polling time: ( 492) minutes.

Conveyance self-test routine

recommended polling time: ( 2) minutes.

SCT capabilities: (0x30a5) SCT Status supported.

SCT Data Table supported.

SMART Attributes Data Structure revision number: 10

Vendor Specific SMART Attributes with Thresholds:

ID# ATTRIBUTE_NAME FLAG VALUE WORST THRESH TYPE UPDATED WHEN_FAILED RAW_VALUE

1 Raw_Read_Error_Rate 0x000f 080 064 006 Pre-fail Always - 92298627

3 Spin_Up_Time 0x0003 096 096 000 Pre-fail Always - 0

4 Start_Stop_Count 0x0032 100 100 020 Old_age Always - 118

5 Reallocated_Sector_Ct 0x0033 100 100 010 Pre-fail Always - 0

7 Seek_Error_Rate 0x000f 086 060 045 Pre-fail Always - 409320868

9 Power_On_Hours 0x0032 069 069 000 Old_age Always - 27649h+07m+13.436s

10 Spin_Retry_Count 0x0013 100 100 097 Pre-fail Always - 0

12 Power_Cycle_Count 0x0032 100 100 020 Old_age Always - 118

183 Runtime_Bad_Block 0x0032 100 100 000 Old_age Always - 0

184 End-to-End_Error 0x0032 100 100 099 Old_age Always - 0

187 Reported_Uncorrect 0x0032 100 100 000 Old_age Always - 0

188 Command_Timeout 0x0032 100 100 000 Old_age Always - 0 0 0

189 High_Fly_Writes 0x003a 100 100 000 Old_age Always - 0

190 Airflow_Temperature_Cel 0x0022 061 059 040 Old_age Always - 39 (Min/Max 39/41)

191 G-Sense_Error_Rate 0x0032 100 100 000 Old_age Always - 0

192 Power-Off_Retract_Count 0x0032 100 100 000 Old_age Always - 1136

193 Load_Cycle_Count 0x0032 100 100 000 Old_age Always - 1638

194 Temperature_Celsius 0x0022 039 041 000 Old_age Always - 39 (0 22 0 0 0)

195 Hardware_ECC_Recovered 0x001a 080 064 000 Old_age Always - 92298627

197 Current_Pending_Sector 0x0012 100 100 000 Old_age Always - 0

198 Offline_Uncorrectable 0x0010 100 100 000 Old_age Offline - 0

199 UDMA_CRC_Error_Count 0x003e 200 200 000 Old_age Always - 0

240 Head_Flying_Hours 0x0000 100 253 000 Old_age Offline - 27346h+38m+31.451s

241 Total_LBAs_Written 0x0000 100 253 000 Old_age Offline - 7925177655

242 Total_LBAs_Read 0x0000 100 253 000 Old_age Offline - 175827905194

SMART Error Log Version: 1

No Errors Logged

SMART Self-test log structure revision number 1

Num Test_Description Status Remaining LifeTime(hours) LBA_of_first_error

# 1 Short offline Completed without error 00% 27589 -

# 2 Short offline Completed without error 00% 27588 -

SMART Selective self-test log data structure revision number 1

SPAN MIN_LBA MAX_LBA CURRENT_TEST_STATUS

1 0 0 Not_testing

2 0 0 Not_testing

3 0 0 Not_testing

4 0 0 Not_testing

5 0 0 Not_testing

Selective self-test flags (0x0):

After scanning selected spans, do NOT read-scan remainder of disk.

If Selective self-test is pending on power-up, resume after 0 minute delay.

backup# smartctl -a /dev/ada5

smartctl 7.2 2021-09-14 r5236 [FreeBSD 13.1-RELEASE-p9 amd64] (local build)

Copyright (C) 2002-20, Bruce Allen, Christian Franke, www.smartmontools.org

=== START OF INFORMATION SECTION ===

Model Family: Western Digital Blue

Device Model: WDC WD40EZRZ-00GXCB0

Serial Number: WD-WCC7K4YZ60ZN

LU WWN Device Id: 5 0014ee 2b9070052

Firmware Version: 80.00A80

User Capacity: 4,000,787,030,016 bytes [4.00 TB]

Sector Sizes: 512 bytes logical, 4096 bytes physical

Rotation Rate: 5400 rpm

Form Factor: 3.5 inches

Device is: In smartctl database [for details use: -P show]

ATA Version is: ACS-3 T13/2161-D revision 5

SATA Version is: SATA 3.1, 6.0 Gb/s (current: 6.0 Gb/s)

Local Time is: Thu Aug 29 09:48:55 2024 CEST

SMART support is: Available - device has SMART capability.

SMART support is: Enabled

=== START OF READ SMART DATA SECTION ===

SMART overall-health self-assessment test result: PASSED

General SMART Values:

Offline data collection status: (0x82) Offline data collection activity

was completed without error.

Auto Offline Data Collection: Enabled.

Self-test execution status: ( 0) The previous self-test routine completed

without error or no self-test has ever

been run.

Total time to complete Offline

data collection: (44340) seconds.

Offline data collection

capabilities: (0x7b) SMART execute Offline immediate.

Auto Offline data collection on/off support.

Suspend Offline collection upon new

command.

Offline surface scan supported.

Self-test supported.

Conveyance Self-test supported.

Selective Self-test supported.

SMART capabilities: (0x0003) Saves SMART data before entering

power-saving mode.

Supports SMART auto save timer.

Error logging capability: (0x01) Error logging supported.

General Purpose Logging supported.

Short self-test routine

recommended polling time: ( 2) minutes.

Extended self-test routine

recommended polling time: ( 470) minutes.

Conveyance self-test routine

recommended polling time: ( 5) minutes.

SCT capabilities: (0x3035) SCT Status supported.

SCT Feature Control supported.

SCT Data Table supported.

SMART Attributes Data Structure revision number: 16

Vendor Specific SMART Attributes with Thresholds:

ID# ATTRIBUTE_NAME FLAG VALUE WORST THRESH TYPE UPDATED WHEN_FAILED RAW_VALUE

1 Raw_Read_Error_Rate 0x002f 200 200 051 Pre-fail Always - 0

3 Spin_Up_Time 0x0027 194 160 021 Pre-fail Always - 5291

4 Start_Stop_Count 0x0032 099 099 000 Old_age Always - 1407

5 Reallocated_Sector_Ct 0x0033 200 200 140 Pre-fail Always - 0

7 Seek_Error_Rate 0x002e 200 200 000 Old_age Always - 0

9 Power_On_Hours 0x0032 038 038 000 Old_age Always - 45622

10 Spin_Retry_Count 0x0032 100 100 000 Old_age Always - 0

11 Calibration_Retry_Count 0x0032 100 100 000 Old_age Always - 0

12 Power_Cycle_Count 0x0032 100 100 000 Old_age Always - 171

192 Power-Off_Retract_Count 0x0032 200 200 000 Old_age Always - 91

193 Load_Cycle_Count 0x0032 181 181 000 Old_age Always - 59948

194 Temperature_Celsius 0x0022 114 100 000 Old_age Always - 36

196 Reallocated_Event_Count 0x0032 200 200 000 Old_age Always - 0

197 Current_Pending_Sector 0x0032 200 200 000 Old_age Always - 0

198 Offline_Uncorrectable 0x0030 200 200 000 Old_age Offline - 0

199 UDMA_CRC_Error_Count 0x0032 200 200 000 Old_age Always - 0

200 Multi_Zone_Error_Rate 0x0008 200 200 000 Old_age Offline - 0

SMART Error Log Version: 1

No Errors Logged

SMART Self-test log structure revision number 1

Num Test_Description Status Remaining LifeTime(hours) LBA_of_first_error

# 1 Short offline Completed without error 00% 45563 -

# 2 Short offline Completed without error 00% 45562 -

SMART Selective self-test log data structure revision number 1

SPAN MIN_LBA MAX_LBA CURRENT_TEST_STATUS

1 0 0 Not_testing

2 0 0 Not_testing

3 0 0 Not_testing

4 0 0 Not_testing

5 0 0 Not_testing

Selective self-test flags (0x0):

After scanning selected spans, do NOT read-scan remainder of disk.

If Selective self-test is pending on power-up, resume after 0 minute delay.

Thanks, R…