Hmm… is this with standard Apps? If so, we’d appreciate a bug report, if you can, to help us diagnose it.

Sorry sir, can’t do it yet.

Also, yes, it is standard Apps.

I used the Update button to grab the new version (from 24.04.1.1 to 24.04.2). The first time, it failed with a failure screen similar to the one above. However, I also noticed that the download was not complete on the machine (the actual size was far off from the expected size). I rebooted the machine, tried again, and it succeeded.

I don’t know why TrueNAS started the installation without a complete download, but that is what the indications suggested. I wish I had thought to take a screen capture, but I did not. Sorry about that. I’m sure that if it is a problem, others will run into it too.

Otherwise 24.04.2 seems to be working. I will let it run for a while to see how things go.

Edit: my little script still seems to work.

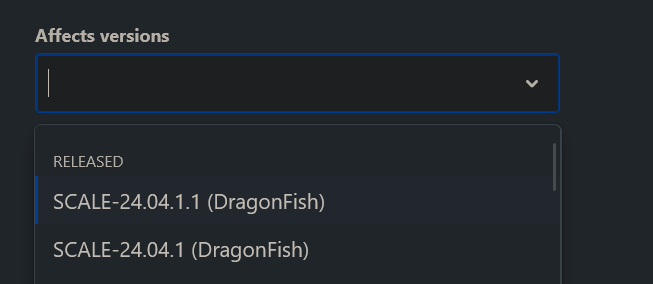

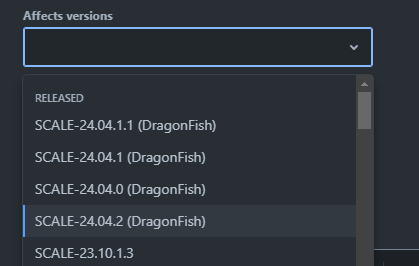

Maybe if you scroll down?

Ughh, I had to clear the cache for it to appear. PBCAK on my end.

@Captain_Morgan NAS-130006 created

Hi, not sure what changed between 24.04.0 and 24.04.1/24.04.1.1/24.04.2, but my Thunderbolt expansion drives are not recognized after upgrading to any of those three releases. I was hoping 24.04.2, with its kernel update, would fix the issue from 24.04.1/24.04.1.1, but apparently it did not. I posted about the issue earlier (Thunderbolt 3 JBOD Support in Truenas) but did not find a solution. I checked whether the kernel has the thunderbolt module by running `lsmod | grep thunderbolt`, and it is there, so I’m not sure what the issue is…
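If it helps narrow this down: `lsmod | grep thunderbolt` only shows that the driver is loaded, not that the enclosure was enumerated. Here is a minimal Python sketch that checks both, assuming a Linux host where loaded modules are listed in `/proc/modules` and the Thunderbolt bus shows up under `/sys/bus/thunderbolt/devices` (both paths are assumptions, not something confirmed in this thread). The function names are illustrative only.

```python
from pathlib import Path

# Assumed location of the Thunderbolt bus in sysfs on Linux.
THUNDERBOLT_SYSFS = Path("/sys/bus/thunderbolt/devices")


def loaded_modules(proc_modules_text: str) -> set[str]:
    """Return module names from /proc/modules text (first field of each line).

    Unlike `grep thunderbolt`, this matches whole module names only.
    """
    return {line.split()[0] for line in proc_modules_text.splitlines() if line.strip()}


def thunderbolt_entries(sysfs_dir: Path = THUNDERBOLT_SYSFS) -> list[str]:
    """List entries the thunderbolt bus has enumerated (empty if the dir is missing)."""
    if not sysfs_dir.is_dir():
        return []
    return sorted(p.name for p in sysfs_dir.iterdir())


if __name__ == "__main__":
    modules = Path("/proc/modules")
    text = modules.read_text() if modules.exists() else ""
    print("thunderbolt module loaded:", "thunderbolt" in loaded_modules(text))
    print("thunderbolt bus entries:", thunderbolt_entries())
```

If the module is loaded but the bus lists nothing beyond the host controller, the problem is more likely enumeration or device authorization than a missing driver.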

Upgraded my main NAS this morning without immediately-apparent issues.

A while back I suggested that, for any TrueNAS upgrade, clicking the Update button should first offer to save a copy of the config file, then reboot the running system, verify the update file, perform the update, and reboot again once the update is complete. Many systems, not only those running TrueNAS, benefit from a freshly booted system that gives the update a clean environment to run in. I think iXsystems would see fewer edge-case failures during system updates this way. After all, it is a system update.

I took my zpool checkpoints and updated; it has been running with no issues so far. Very easy update.

It’s not a bad suggestion, and it would be more reliable. However, it probably helps a very small percentage of updates and doubles the amount of downtime. Users can follow this process themselves if they want.

For our Enterprise users the double downtime wouldn’t be acceptable, so we’d prefer to focus on making these updates as reliable as possible.

I tried to update from 24.04.1.1 at least four times and always get the same error on boot:

```
Loading Linux 6.6.32-production+truenas …
Loading initial ramdisk …
error: checksum verification failed.
Press any key to continue…
-production+truenas #1
[ 0.511975] Hardware name: HP ProLiant MicroServer Gen8, BIOS J06 04/04/2019
[ 0.512047] Call Trace:
[ 0.512114]
[ 0.512181] dump_stack_lvl+0x47/0x60
[ 0.512254] panic+0x339/0x350
[ 0.512324] mount_root_generic+0x1ac/0x330
[ 0.512395] prepare_namespace+0x64/0x280
[ 0.512465] kernel_init_freeable+0x41c/0x470
[ 0.512535] ? __pfx_kernel_init+0x10/0x10
[ 0.512606] kernel_init+0x1a/0x1c0
[ 0.512674] ret_from_fork+0x34/0x50
[ 0.512744] ? __pfx_kernel_init+0x10/0x10
[ 0.512813] ret_from_fork_asm+0x1b/0x30
[ 0.512882]
[ 0.512987] Kernel Offset: 0x16a00000 from 0xffffffff81000000 (relocation range: 0xffffffff80000000-0xffffffffbfffffff)
[ 0.517286] ERST: [Firmware Warn]: Firmware does not respond in time.
[ 0.520028] ERST: [Firmware Warn]: Firmware does not respond in time.
[ 0.522724] ERST: [Firmware Warn]: Firmware does not respond in time.
[ 0.525389] ERST: [Firmware Warn]: Firmware does not respond in time.
[ 0.526838] pstore: backend (erst) writing error (-28)
[ 0.526908] ---[ end Kernel panic - not syncing: VFS: Unable to mount root fs on unknown-block(0,0) ]---
```

Going back to 24.04 works fine.

My system:

- HPE ProLiant MicroServer Gen8
- Xeon E3-1220
- 12 GB RAM

Booting from an SSD connected through an SSD-to-USB adapter to the internal USB slot.

I’ve been working with FreeNAS since 2018 and migrated to SCALE with 23.10.1.

@Captain_Morgan

My thinking was more that it could be made an option. The dialog that pops up when the Update button is selected, asking to save the config file, could also have a [Reboot System] checkbox, accompanied by a statement like “The system has been in service for a while. A system reboot is suggested before starting the update process.”

That would leave the choice to reboot first to a fresh system up to the user.
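A rough Python sketch of that optional prompt. The 30-day threshold, the function names, and the dialog wording are hypothetical illustrations of the suggestion, not anything TrueNAS implements; uptime is read from the first field of Linux’s `/proc/uptime`.

```python
from pathlib import Path

# Hypothetical threshold for the suggested prompt: 30 days of uptime.
REBOOT_SUGGESTION_SECONDS = 30 * 24 * 3600


def parse_uptime(proc_uptime_text: str) -> float:
    """The first field of /proc/uptime is seconds since boot."""
    return float(proc_uptime_text.split()[0])


def reboot_suggested(uptime_seconds: float,
                     threshold: float = REBOOT_SUGGESTION_SECONDS) -> bool:
    return uptime_seconds >= threshold


def update_dialog_text(uptime_seconds: float) -> str:
    """Build the (hypothetical) pre-update dialog text, adding the
    reboot suggestion and checkbox only for long-running systems."""
    text = "Save a copy of the configuration file before updating."
    if reboot_suggested(uptime_seconds):
        text += ("\nThe system has been in service for a while. A system reboot"
                 " is suggested before starting the update process."
                 "\n[ ] Reboot System")
    return text


if __name__ == "__main__":
    uptime_file = Path("/proc/uptime")
    if uptime_file.exists():
        print(update_dialog_text(parse_uptime(uptime_file.read_text())))
```

Because the checkbox only appears past the threshold and is never forced, recently rebooted systems (and Enterprise users who can’t afford a second outage) get the current single-reboot flow.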

I see where the objection to rebooting twice comes from, but I have also worked for some very large corporations where the IT department, following policy, decided how and when server updates were performed. Taking some servers down for an update during production could cost over $15,000 a minute in lost production, so those updates would be put off until a suitable non-production time block.

Just upgraded from 23.10.2 to 24.04.2 and it went extremely smoothly. All apps came up just fine, and the UI seems a mite faster.

Suggest you start a separate thread. It seems to be a unique problem and may be hardware-related.

Have you tried downloading the update again? The checksum verification failure might imply the file was corrupted before it was saved.
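For anyone wanting to rule out a bad download by hand, here is a minimal Python sketch that checks a file’s size and SHA-256 digest against expected values. The file path and the expected size/digest are placeholders; where the update file lives on disk and where a reference checksum would come from are assumptions not covered in this thread.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in 1 MiB chunks so large images never need to fit in RAM."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_download(path: Path, expected_size: int, expected_sha256: str) -> list[str]:
    """Return a list of problems; an empty list means the file looks intact."""
    if not path.exists():
        return [f"{path} does not exist"]
    problems = []
    actual_size = path.stat().st_size
    if actual_size != expected_size:
        # A truncated (incomplete) download shows up here before hashing.
        problems.append(f"size mismatch: expected {expected_size}, got {actual_size}")
    if sha256_of(path).lower() != expected_sha256.lower():
        problems.append("SHA-256 mismatch")
    return problems
```

The size check is cheap and catches an incomplete download immediately; the digest comparison also catches files that are the right size but corrupted.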

Can you verify that the boot drive is healthy? Not too full, no errors?
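A quick way to check the “not too full” part from Python is `shutil.disk_usage`. The path and the 80% threshold below are assumptions, and on a ZFS boot pool `zpool list` and `zpool status` give the more direct picture of capacity and errors.

```python
import shutil
from typing import Optional


def usage_percent(path: str = "/") -> float:
    """Percentage of the filesystem at `path` that is in use."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return 100.0 * usage.used / usage.total


def fullness_warning(path: str = "/", threshold: float = 80.0) -> Optional[str]:
    """Return a warning string if usage is at or above `threshold` percent, else None."""
    pct = usage_percent(path)
    if pct >= threshold:
        return f"{path} is {pct:.1f}% full"
    return None


if __name__ == "__main__":
    print(fullness_warning("/") or "usage below threshold")
```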

Is the RAM ECC-protected?

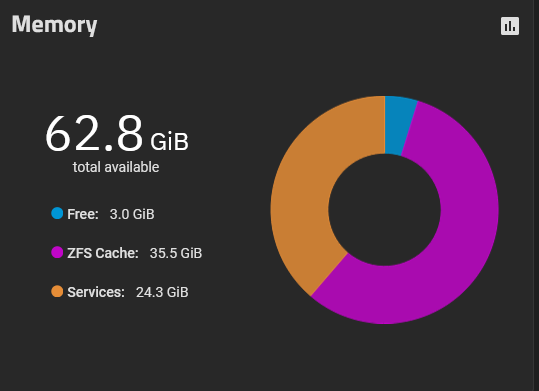

The upgrade from 24.04 to 24.04.2 went well, but I am seeing nearly half my available memory allocated to Services.

I only have the StorJ App running, and I did not see this previously with the patched 24.04 version. I would welcome any steps to diagnose what is grabbing so much of the RAM here instead of it being allocated to Cache.
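As a starting point for that diagnosis, here is a rough Python sketch that estimates RAM used outside the ZFS ARC. It assumes Linux with OpenZFS, where ARC statistics are exposed in `/proc/spl/kstat/zfs/arcstats` as `name type data` rows after two header lines. It is only an approximation and not necessarily how the dashboard computes its Services figure.

```python
from pathlib import Path


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo; values with a kB unit are converted to bytes."""
    out = {}
    for line in text.splitlines():
        key, _, rest = line.partition(":")
        fields = rest.split()
        if fields:
            value = int(fields[0])
            out[key] = value * 1024 if len(fields) > 1 and fields[1] == "kB" else value
    return out


def parse_arcstats(text: str) -> dict[str, int]:
    """Parse arcstats rows (name type data), skipping the two header lines."""
    out = {}
    for line in text.splitlines()[2:]:
        parts = line.split()
        if len(parts) == 3 and parts[2].lstrip("-").isdigit():
            out[parts[0]] = int(parts[2])
    return out


def non_arc_used_bytes(meminfo: dict[str, int], arc: dict[str, int]) -> int:
    """MemTotal - MemAvailable - ARC size, clamped at zero."""
    used = meminfo["MemTotal"] - meminfo["MemAvailable"]
    return max(used - arc.get("size", 0), 0)


if __name__ == "__main__":
    meminfo_path = Path("/proc/meminfo")
    arc_path = Path("/proc/spl/kstat/zfs/arcstats")
    if meminfo_path.exists():
        meminfo = parse_meminfo(meminfo_path.read_text())
        arc = parse_arcstats(arc_path.read_text()) if arc_path.exists() else {}
        print(f"used outside ARC: {non_arc_used_bytes(meminfo, arc) / 2**30:.2f} GiB")
```

If this number is large, per-process memory (for example from `ps` sorted by RSS) is the next place to look.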

Thanks

CC

I assume there is no real issue. Suggest you start another thread and see what diagnostics you can provide.

SCALE 24.04.2 now has over 20,000 users and this issue has not come up.

I’ve just performed the same update. Very painless… except for the things I’ll capture in a separate thread.

I am now getting Samba connection issues:

```
[2024/07/17 13:23:32.793863, 0] …/…/source3/smbd/msdfs.c:119(parse_dfs_path_strict)
  parse_dfs_path_strict: Hostname truenas._smb._tcp.local is not ours.
[2024/07/17 13:23:32.840095, 0] …/…/source3/smbd/msdfs.c:106(parse_dfs_path_strict)
  parse_dfs_path_strict: can't parse hostname from path \truenas._smb._tcp.local
```

I previously connected successfully using the “truenas.local” hostname. This shouldn’t be a problem:

```
root@truenas:~# cat /etc/hosts
127.0.0.1 truenas.local truenas
127.0.0.1 localhost
# The following lines are desirable for IPv6 capable hosts
::1 localhost ip6-localhost ip6-loopback
ff02::1 ip6-allnodes
ff02::2 ip6-allrouters
# STATIC ENTRIES
```
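To check which names a hosts file actually maps, here is a small parser sketch (a hypothetical helper, not part of TrueNAS). It follows the usual `address name [aliases...]` layout and ignores `#` comments.

```python
def parse_hosts(text: str) -> dict[str, list[str]]:
    """Map each hostname/alias (lower-cased) to the addresses listed for it."""
    names: dict[str, list[str]] = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and blank lines
        if not line:
            continue
        address, *hostnames = line.split()
        for name in hostnames:
            names.setdefault(name.lower(), []).append(address)
    return names


if __name__ == "__main__":
    sample = "127.0.0.1 truenas.local truenas\n127.0.0.1 localhost\n::1 localhost\n"
    hosts = parse_hosts(sample)
    for name in ("truenas.local", "truenas._smb._tcp.local"):
        print(name, "->", hosts.get(name, "not listed"))
```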

Similarly, my NFS shares have also stopped working; I get “permission denied”.

Switching back to the 24.04.1.1 root restores connectivity for both.

Why are you trying to use an mDNS service principal to connect to an SMB server?

truenas._smb._tcp != truenas.local
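To illustrate the distinction, here is a sketch that splits a DNS-SD service instance name (the RFC 6763 layout `<Instance>.<Service>.<Domain>`, where the service is a `_name._tcp` or `_name._udp` pair) into its parts. An instance name identifies a service advertisement, whose SRV record then points at the actual host. A plain hostname like `truenas.local` has no such pair. This simplified sketch does not handle instance names containing escaped dots.

```python
from typing import NamedTuple, Optional


class ServiceInstance(NamedTuple):
    instance: str   # user-visible name, e.g. "truenas"
    service: str    # application label, e.g. "_smb"
    protocol: str   # "_tcp" or "_udp"
    domain: str     # e.g. "local" for mDNS


def parse_service_instance(name: str) -> Optional[ServiceInstance]:
    """Split a DNS-SD instance name; return None for an ordinary hostname."""
    labels = name.rstrip(".").split(".")
    # Need at least one instance label before, and one domain label after, the pair.
    for i in range(1, len(labels) - 2):
        if labels[i].startswith("_") and labels[i + 1] in ("_tcp", "_udp"):
            return ServiceInstance(
                instance=".".join(labels[:i]),
                service=labels[i],
                protocol=labels[i + 1],
                domain=".".join(labels[i + 2:]),
            )
    return None


if __name__ == "__main__":
    for n in ("truenas._smb._tcp.local", "truenas.local"):
        print(n, "->", parse_service_instance(n))
```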

I am not trying anything; in this case it is Time Machine connecting, I suppose.

And, importantly, this is not an issue with 24.04.1.1. Everything works as expected there, with no errors in smb.log.

Also, since NFS also doesn’t work, this is likely a whole different kind of issue.