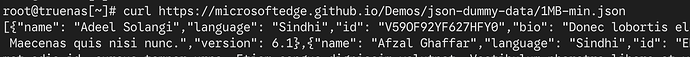

Recently, the middleware keeps failing when it checks for image updates. It doesn't look like a network issue: I can still pull the image with `docker pull` and reach other sites without trouble. Are there any specific tools or logs that can pinpoint the exact cause?
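The traceback below shows that the update check does not go through the Docker daemon. Instead, middlewared's own `apps_images/client.py` requests each image's manifest from the registry over HTTP using aiohttp, so a successful `docker pull` does not by itself prove that this request works. A first step is to confirm which host and path an image resolves to. Below is a minimal sketch. `parse_image_ref` and `manifest_url` are hypothetical helper names, the defaults are Docker Hub's usual ones (`registry-1.docker.io`, with `library/` for official images), digest references (`name@sha256:...`) are not handled, and middlewared's own parsing may differ.

```python
# Hypothetical helpers (not middlewared code): map an image reference to the
# registry HTTP API v2 manifest URL a direct update check would request.
# Assumes Docker Hub defaults; digest references ("@sha256:...") are ignored.

DEFAULT_REGISTRY = "registry-1.docker.io"


def parse_image_ref(ref: str) -> tuple[str, str, str]:
    """Split '[registry/]repo[:tag]' into (registry, repo, tag)."""
    name, tag = ref, "latest"
    # A colon after the last slash separates the tag; a colon before it
    # belongs to a registry port, e.g. "localhost:5000/app".
    last_slash = ref.rfind("/")
    colon = ref.rfind(":")
    if colon > last_slash:
        name, tag = ref[:colon], ref[colon + 1:]

    parts = name.split("/", 1)
    first = parts[0]
    # The first component is a registry host only if it looks like one.
    if len(parts) == 2 and ("." in first or ":" in first or first == "localhost"):
        registry, repo = first, parts[1]
    else:
        registry, repo = DEFAULT_REGISTRY, name

    # "docker.io" is an alias; the API itself is served from registry-1.
    if registry in ("docker.io", "index.docker.io"):
        registry = DEFAULT_REGISTRY
    # Official Docker Hub images live under the "library/" namespace.
    if registry == DEFAULT_REGISTRY and "/" not in repo:
        repo = f"library/{repo}"
    return registry, repo, tag


def manifest_url(registry: str, repo: str, tag: str) -> str:
    """Manifest endpoint for repo:tag on the given registry."""
    return f"https://{registry}/v2/{repo}/manifests/{tag}"


if __name__ == "__main__":
    for ref in ["nginx", "linuxserver/plex:latest",
                "ghcr.io/home-assistant/home-assistant:stable"]:
        print(manifest_url(*parse_image_ref(ref)))
```

For example, `nginx` resolves to `https://registry-1.docker.io/v2/library/nginx/manifests/latest`, while `ghcr.io/home-assistant/home-assistant:stable` is looked up on `ghcr.io` itself, so the host that has to be reachable depends on the image.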

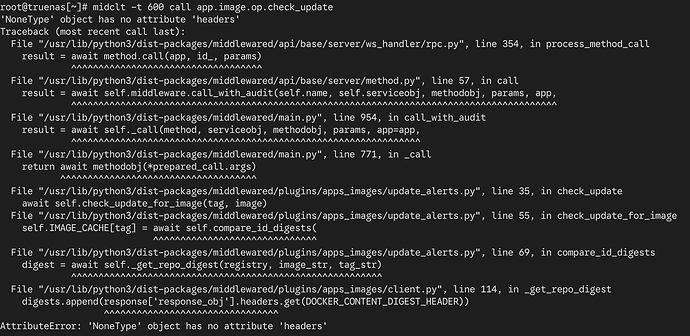

```
root@truenas[/var/log]# midclt -t 600 call app.image.op.check_update
Connection closed.
```

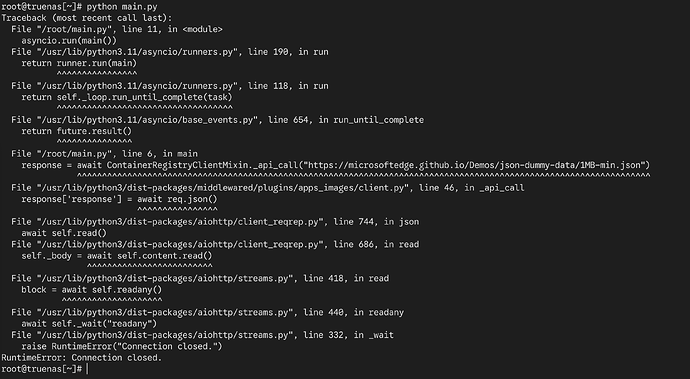

```
Traceback (most recent call last):
  File "/usr/lib/python3/dist-packages/middlewared/api/base/server/ws_handler/rpc.py", line 354, in process_method_call
    result = await method.call(app, id_, params)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/middlewared/api/base/server/method.py", line 57, in call
    result = await self.middleware.call_with_audit(self.name, self.serviceobj, methodobj, params, app,
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 954, in call_with_audit
    result = await self._call(method, serviceobj, methodobj, params, app=app,
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/middlewared/main.py", line 771, in _call
    return await methodobj(*prepared_call.args)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/update_alerts.py", line 35, in check_update
    await self.check_update_for_image(tag, image)
  File "/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/update_alerts.py", line 54, in check_update_for_image
    self.IMAGE_CACHE[tag] = await self.compare_id_digests(
                            ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/update_alerts.py", line 68, in compare_id_digests
    digest = await self._get_repo_digest(registry, image_str, tag_str)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/client.py", line 92, in _get_repo_digest
    response = await self._get_manifest_response(
               ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/client.py", line 69, in _get_manifest_response
    response = await self._api_call(manifest_url, headers=headers, mode=mode)
               ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/client.py", line 46, in _api_call
    response['response'] = await req.json()
                           ^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/aiohttp/client_reqrep.py", line 744, in json
    await self.read()
  File "/usr/lib/python3/dist-packages/aiohttp/client_reqrep.py", line 686, in read
    self._body = await self.content.read()
                 ^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/aiohttp/streams.py", line 418, in read
    block = await self.readany()
            ^^^^^^^^^^^^^^^^^^^^
  File "/usr/lib/python3/dist-packages/aiohttp/streams.py", line 440, in readany
    await self._wait("readany")
  File "/usr/lib/python3/dist-packages/aiohttp/streams.py", line 332, in _wait
    raise RuntimeError("Connection closed.")
RuntimeError: Connection closed.
```
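The traceback narrows things down: the manifest request got as far as `_api_call` in `client.py`, and the error was raised inside `req.json()` while aiohttp was reading the response body, because the connection was no longer open at that point. To check whether the same lookup also breaks outside middlewared, it can be repeated from the TrueNAS shell. The following is a minimal sketch, assuming the image is on Docker Hub and can be pulled anonymously. It uses Docker Hub's token endpoint (`auth.docker.io`). The function names, the 15-second timeout, and the list of `Accept` media types are my own choices and not necessarily what middlewared sends. Other registries advertise their token endpoint in the `WWW-Authenticate` header of a 401 response instead.

```python
# Hypothetical diagnostic (not part of middlewared): repeat the manifest
# lookup for one Docker Hub image and read the whole body, as the update
# check does with req.json(). Assumes anonymous pull access to Docker Hub.
import http.client
import json
import sys
import urllib.request

TIMEOUT = 15  # seconds; an arbitrary choice for this sketch

# Media types a registry may return for a tag: multi-arch lists/indexes
# and single-platform manifests, in Docker and OCI flavours.
MANIFEST_ACCEPT = ", ".join([
    "application/vnd.docker.distribution.manifest.list.v2+json",
    "application/vnd.docker.distribution.manifest.v2+json",
    "application/vnd.oci.image.index.v1+json",
    "application/vnd.oci.image.manifest.v1+json",
])


def token_url(repo: str) -> str:
    """Docker Hub anonymous token endpoint granting pull access to repo."""
    return ("https://auth.docker.io/token?service=registry.docker.io"
            f"&scope=repository:{repo}:pull")


def manifest_request(repo: str, tag: str, token: str) -> urllib.request.Request:
    """Build the authenticated manifest GET for repo:tag on Docker Hub."""
    return urllib.request.Request(
        f"https://registry-1.docker.io/v2/{repo}/manifests/{tag}",
        headers={"Authorization": f"Bearer {token}", "Accept": MANIFEST_ACCEPT},
    )


def check(repo: str, tag: str) -> None:
    with urllib.request.urlopen(token_url(repo), timeout=TIMEOUT) as resp:
        token = json.load(resp)["token"]
    req = manifest_request(repo, tag, token)
    with urllib.request.urlopen(req, timeout=TIMEOUT) as resp:
        print("status:", resp.status)
        print("digest:", resp.headers.get("Docker-Content-Digest"))
        body = resp.read()  # raises IncompleteRead if the body is cut short
        json.loads(body)    # confirm the body parses, as req.json() would
        print("body OK:", len(body), "bytes")


if __name__ == "__main__":
    if len(sys.argv) != 3:
        print("usage: python3 check_manifest.py <repo> <tag>  (e.g. library/nginx latest)")
    else:
        try:
            check(sys.argv[1], sys.argv[2])
        except (http.client.HTTPException, OSError, ValueError) as exc:
            # HTTPException covers IncompleteRead; OSError covers URLError,
            # HTTP error statuses, timeouts and resets; ValueError covers
            # a body that is not valid JSON.
            print("failed:", type(exc).__name__, exc)
```

Run it as, for example, `python3 check_manifest.py library/nginx latest` (the file name is arbitrary). If this script also fails while reading the body, something between TrueNAS and the registry, such as a proxy or firewall, is likely cutting the response. If it succeeds consistently, that points more toward middlewared's own request handling.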