I’m sure some people are aware of the problem where, when you import an existing pool, TrueNAS prefixes another /mnt onto your mountpoint, resulting in /mnt/mnt/yourpoolname.

I followed the instructions from the other posts to manually set my mountpoint to /yourpoolname.

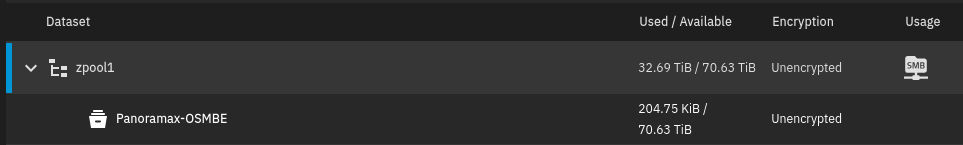
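
For reference, the change can be sketched roughly as below. This is only a minimal sketch, not the exact commands from those posts: the pool name zpool1 is taken from my output further down, `strip_mnt_prefix` is a hypothetical helper name, and the script only prints the `zfs set` command for review instead of running it.

```shell
#!/bin/sh
# Sketch only: prints the command, does not change anything on the system.
# Assumption: the pool is called zpool1 and was mounted at /mnt/mnt/zpool1.

# Hypothetical helper: strip every leading /mnt from a path,
# so /mnt/mnt/zpool1 becomes /zpool1.
strip_mnt_prefix() {
    path=$1
    while [ "${path#/mnt/}" != "$path" ]; do
        path="/${path#/mnt/}"
    done
    printf '%s\n' "$path"
}

pool="zpool1"
reported="/mnt/mnt/$pool"
wanted=$(strip_mnt_prefix "$reported")

# Print the command so it can be checked before running it by hand.
echo "sudo zfs set mountpoint=$wanted $pool"
```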

That does make things appear normally, BUT I noticed that the original datasets don’t appear in the TrueNAS interface where I would expect them.

New datasets do appear though. How come?

- I only see newly created datasets in the Datasets tab of TrueNAS.

- The SMB shares section in the Shares tab shows my two existing datasets with paths /mnt/zpool1/OSM images and /mnt/zpool1/other respectively, and they work.

So the existing ones do appear correctly in the SMB share menu, and they work?

    truenas_admin@truenas[~]$ zfs list
    NAME                                                        USED   AVAIL  REFER  MOUNTPOINT
    boot-pool                                                   3.06G  141G   96K    none
    boot-pool/.system                                           2.89M  141G   120K   legacy
    boot-pool/.system/configs-ae32c386e13840b2bf9c0083275e7941  96K    141G   96K    legacy
    boot-pool/.system/cores                                     96K    1024M  96K    legacy
    boot-pool/.system/netdata-ae32c386e13840b2bf9c0083275e7941  2.11M  141G   2.11M  legacy
    boot-pool/.system/nfs                                       112K   141G   112K   legacy
    boot-pool/.system/samba4                                    288K   141G   288K   legacy
    boot-pool/.system/vm                                        96K    141G   96K    legacy
    boot-pool/ROOT                                              3.04G  141G   96K    none
    boot-pool/ROOT/25.10.1                                      3.04G  141G   104M   legacy
    boot-pool/ROOT/25.10.1/audit                                1.69M  141G   1.69M  /audit
    boot-pool/ROOT/25.10.1/conf                                 7.54M  141G   7.54M  /conf
    boot-pool/ROOT/25.10.1/data                                 288K   141G   288K   /data
    boot-pool/ROOT/25.10.1/etc                                  7.45M  141G   6.48M  /etc
    boot-pool/ROOT/25.10.1/home                                 108K   141G   108K   /home
    boot-pool/ROOT/25.10.1/mnt                                  112K   141G   112K   /mnt
    boot-pool/ROOT/25.10.1/opt                                  4.65M  141G   4.65M  /opt
    boot-pool/ROOT/25.10.1/root                                 140K   141G   140K   /root
    boot-pool/ROOT/25.10.1/usr                                  2.88G  141G   2.88G  /usr
    boot-pool/ROOT/25.10.1/var                                  43.7M  141G   4.37M  /var
    boot-pool/ROOT/25.10.1/var/ca-certificates                  96K    141G   96K    /var/local/ca-certificates
    boot-pool/ROOT/25.10.1/var/lib                              28.5M  141G   28.1M  /var/lib
    boot-pool/ROOT/25.10.1/var/lib/incus                        96K    141G   96K    /var/lib/incus
    boot-pool/ROOT/25.10.1/var/log                              10.6M  141G   1.74M  /var/log
    boot-pool/ROOT/25.10.1/var/log/journal                      8.87M  141G   8.87M  /var/log/journal
    boot-pool/grub                                              9.02M  141G   9.02M  legacy
    zpool1                                                      32.7T  70.6T  32.7T  /mnt/zpool1
    zpool1/Panoramax-OSMBE                                      205K   70.6T  205K   /mnt/zpool1/Panoramax-OSMBE
    zpool2_less-critical                                        1.18M  64.2T  162K   /mnt/zpool2_less-critical

    truenas_admin@truenas[~]$ sudo zfs get -t filesystem -r mountpoint zpool1
    [sudo] password for truenas_admin:
    NAME                    PROPERTY    VALUE                        SOURCE
    zpool1                  mountpoint  /mnt/zpool1                  local
    zpool1/Panoramax-OSMBE  mountpoint  /mnt/zpool1/Panoramax-OSMBE  inherited from zpool1
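
To double-check what the listing shows, the share paths can be compared against the dataset mountpoints. This is a minimal sketch, assuming the mountpoints are copied by hand from the `zfs list` output above (on a live system, `zfs list -H -o mountpoint` should give the same list); `is_dataset_mountpoint` is a hypothetical helper name, not something TrueNAS provides.

```shell
#!/bin/sh
# Sketch only. Mountpoints are copied from the zfs list output above;
# on a live system they could come from: zfs list -H -o mountpoint
mountpoints="/mnt/zpool1
/mnt/zpool1/Panoramax-OSMBE
/mnt/zpool2_less-critical"

# Hypothetical helper: succeeds if the path exactly matches a dataset mountpoint.
is_dataset_mountpoint() {
    printf '%s\n' "$mountpoints" | grep -Fxq -- "$1"
}

# The two existing share paths plus the newly created dataset.
for path in "/mnt/zpool1/OSM images" "/mnt/zpool1/other" "/mnt/zpool1/Panoramax-OSMBE"; do
    if is_dataset_mountpoint "$path"; then
        echo "$path: dataset mountpoint"
    else
        echo "$path: not in the dataset list"
    fi
done
```

Going by the output above, the two existing share paths are not listed as datasets of their own, while Panoramax-OSMBE is.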

I do see that I can ‘upgrade’ the ZFS on my pool, but I’m not sure whether that’s the solution to this problem.