Hi there!

I am relatively new to TrueNAS (coming from an old QNAP TS-859 Pro+), but so far I LOVE it and have gotten the hang of it. My TrueNAS system currently runs on a Ryzen 5 3600 with 32GB DDR4-3200 ECC. For storage I have one mirrored enterprise-grade SSD VDEV for boot/system, one mirrored NVMe VDEV (nvme-pool) for apps, VMs and other short-lived or fast-access data, and one IronWolf RAIDZ1 VDEV (ironwolf-pool) that currently consists of 3x IronWolf 4TB.

Even on my old QNAP I always followed the usual 3-2-1 principle, using Rsync until now. On my new TrueNAS setup I’m trying to accomplish the following:

- one local copy in another room (basic Rsync task for mission-critical datasets) → working

- one offsite copy in another location (remote replication task to another TrueNAS SCALE system) → in progress

- one offsite copy in the cloud (Hetzner Storage Box BX21 w/ Restic) → not yet implemented

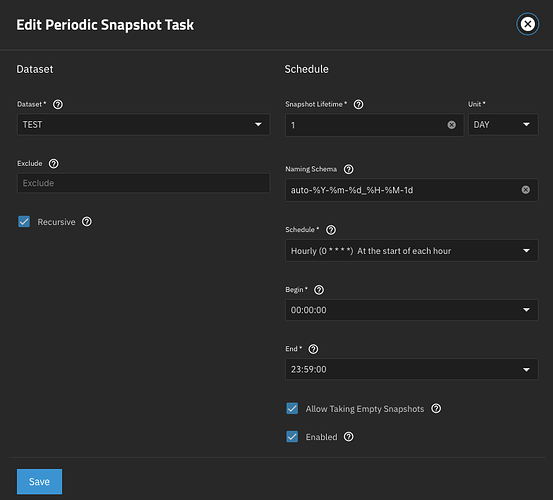

Since I’m relatively new to ZFS and its snapshots, I set up tiered snapshot tasks (following the “Capt Stux - TrueNAS Scale: Setting up and using Tiered Snapshots” guide on YouTube; I can’t post the link here, probably because my account is too new) as follows:

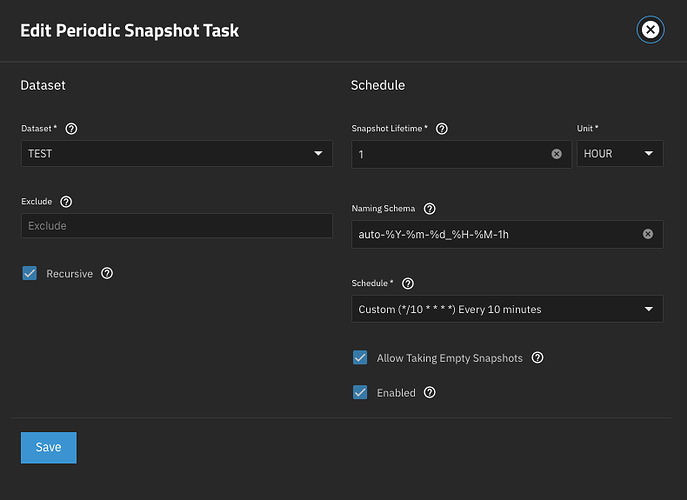

- nvme-pool hourly, recursive, do NOT allow empty, retention 24h

- nvme-pool daily, recursive, allow empty, retention 7 days

- ironwolf-pool daily, recursive, allow empty, retention 7 days

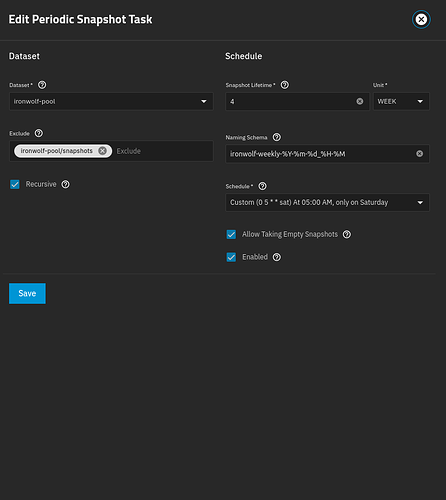

- ironwolf-pool weekly, recursive, allow empty, retention 4 weeks

- ironwolf-pool monthly, recursive, allow empty, retention 6 months

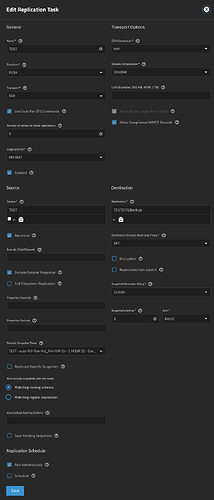
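To make the schedule above easier to reason about, here is a minimal Python sketch of the five tiers as plain data. The `TIERS` table and the `is_expired` helper are my own illustration, not anything TrueNAS exposes, and a month is approximated as 30 days.

```python
from datetime import datetime, timedelta

# Snapshot tiers as listed above: (pool, tier) -> retention.
# Assumption: "6 months" is approximated as 6 * 30 days.
TIERS = {
    ("nvme-pool", "hourly"): timedelta(hours=24),
    ("nvme-pool", "daily"): timedelta(days=7),
    ("ironwolf-pool", "daily"): timedelta(days=7),
    ("ironwolf-pool", "weekly"): timedelta(weeks=4),
    ("ironwolf-pool", "monthly"): timedelta(days=6 * 30),
}


def is_expired(pool: str, tier: str, taken_at: datetime, now: datetime) -> bool:
    """Return True if a snapshot of this tier is older than its retention."""
    return now - taken_at > TIERS[(pool, tier)]
```

For example, a weekly ironwolf-pool snapshot taken 29 days ago would count as expired, while a daily nvme-pool snapshot taken 6 days ago would not.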

For nvme-pool daily, I have two Replication Tasks, both of which are working just fine:

- LOCAL, copy from nvme-pool to ironwolf-pool

- REMOTE, copy from nvme-pool to remote encrypted dataset on offsite TrueNAS, retention = 2 weeks (double the source)

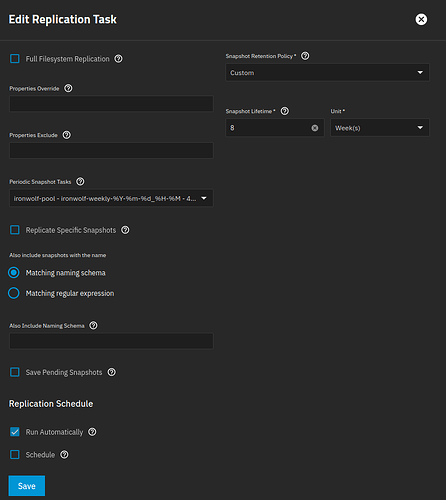

For ironwolf-pool I also have two Replication Tasks, but I’m struggling to get them right:

- REMOTE, copy from ironwolf-pool WEEKLY to remote encrypted dataset on offsite TrueNAS, retention = 8 weeks (double the source)

- REMOTE, copy from ironwolf-pool MONTHLY to remote encrypted dataset on offsite TrueNAS, retention = 12 months (double the source)

The latter two are only semi-working. Every time either of them runs, it removes the other replication task’s existing snapshots on the remote TrueNAS (i.e. monthly removes weekly or vice versa), which eventually leads to a “no incremental base” error on the other replication task.
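Here is my current mental model of the failure as a minimal Python sketch. Two things in it are assumptions on my part: the snapshot names are made up for illustration, and `prune_foreign` is only my guess at what each task effectively does on the destination, not confirmed TrueNAS behaviour. The one part I’m sure of is that an incremental send needs a snapshot that exists on both sides.

```python
def incremental_base(source_snaps, dest_snaps):
    """Return the newest snapshot present on both sides, or None.

    Both lists are assumed to be ordered oldest-first.
    """
    on_dest = set(dest_snaps)
    common = [name for name in source_snaps if name in on_dest]
    return common[-1] if common else None


def prune_foreign(dest_snaps, own_prefix):
    """Hypothetical: keep only the snapshots whose name starts with own_prefix.

    This models my guess that a task removes the other task's snapshots.
    """
    return [name for name in dest_snaps if name.startswith(own_prefix)]


# Hypothetical snapshot names, for illustration only.
source_weekly = ["auto-weekly-2024-01-07", "auto-weekly-2024-01-14"]
dest = ["auto-monthly-2024-01-01", "auto-weekly-2024-01-07", "auto-weekly-2024-01-14"]

base_before = incremental_base(source_weekly, dest)

# The monthly task runs and (by assumption) removes everything that is not its own.
dest = prune_foreign(dest, "auto-monthly-")

base_after = incremental_base(source_weekly, dest)
print(base_before, base_after)
```

If this model is right, the weekly task has a usable base before the monthly run and none afterwards, which would match the error I’m seeing.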

The destination dataset structure looks like this:

- tank

- replicas

- nvme-pool

- ironwolf-pool

- replicas

The nvme-daily replication task points to tank/replicas/nvme-pool as its destination, and both ironpool-weekly and ironpool-monthly point to tank/replicas/ironwolf-pool on the offsite TrueNAS.

The reason I’m not selecting both ironwolf snapshot tasks in the same remote replication task is that I want a different retention time for each of them.

I’ve been trying to get my head around this for some days now, but I can’t figure out what I’m missing. Searching Google and the forum/community unfortunately didn’t point me in the right direction, and neither did the official documentation or other guides. Maybe I’m overthinking it and can’t see the forest for the trees?

Any help will be much appreciated!