OK, this isn't really related, but I think I found the root cause, and it might be a bug or at least worth sharing. It turns out the runaway middleware process was apparently the TrueNAS client checking for container image updates over and over.

etrieved\nfuture: <Task finished name='Task-554' coro=<Middleware.call() done, defined at /usr/lib/python3/dist-packages/middlewared/main.py:990> exception=RuntimeError('Connection closed.')> @cee:{"TNLOG": {"exception": "Traceback (most recent call last):\n File \"/usr/lib/python3/dist-packages/middlewared/main.py\", line 1000, in call\n return await self._call(\n ^^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/middlewared/main.py\", line 715, in _call\n return await methodobj(*prepared_call.args)\n ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/update_alerts.py\", line 35, in check_update\n await self.check_update_for_image(tag, image)\n File \"/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/update_alerts.py\", line 54, in check_update_for_image\n self.IMAGE_CACHE[tag] = await self.compare_id_digests(\n ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/update_alerts.py\", line 68, in compare_id_digests\n digest = await self._get_repo_digest(registry, image_str, tag_str)\n ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/client.py\", line 92, in _get_repo_digest\n response = await self._get_manifest_response(\n ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/client.py\", line 76, in _get_manifest_response\n response = await self._api_call(manifest_url, headers=headers, mode=mode)\n ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/middlewared/plugins/apps_images/client.py\", line 46, in _api_call\n response['response'] = await req.json()\n ^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/aiohttp/client_reqrep.py\", line 1272, in json\n await self.read()\n File \"/usr/lib/python3/dist-packages/aiohttp/client_reqrep.py\", line 1214, in read\n self._body = await self.content.read()\n ^^^^^^^^^^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/aiohttp/streams.py\", line 386, in read\n block = await self.readany()\n ^^^^^^^^^^^^^^^^^^^^\n File \"/usr/lib/python3/dist-packages/aiohttp/streams.py\", line 408, in readany\n await self._wait(\"readany\")\n File \"/usr/lib/python3/dist-packages/aiohttp/streams.py\", line 300, in _wait\n raise RuntimeError(\"Connection closed.\")\nRuntimeError: Connection closed.", "type": "PYTHON_EXCEPTION", "time": "2025-08-02 18:24:15.356113"}}

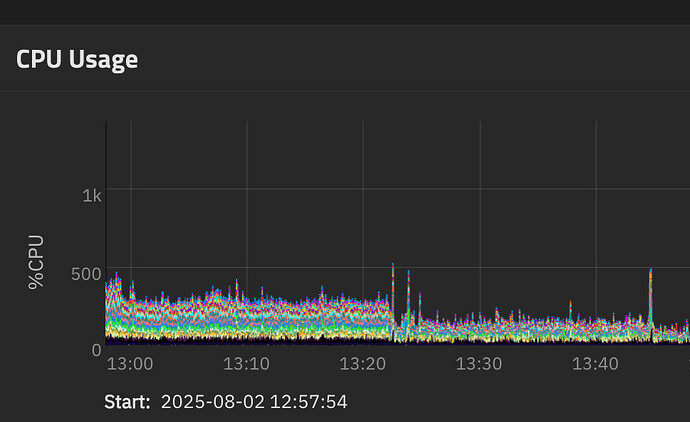

This was the image in question:

`ghcr.io/open-webui/open-webui:main`

This app was running, but for some reason TrueNAS kept trying to connect to the GitHub Container Registry (ghcr.io) and failing. After I stopped this container, cleaned up all unused images, and started it again, everything is fine and CPU usage is back to normal.

It looks to me like when the update check for a container image fails, it doesn't fail gracefully, back off, and retry later. I don't have registry credentials set up for ghcr, but I don't think those would apply to this manifest API call anyway.
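Just to illustrate what I mean by failing gracefully, here's a rough Python sketch of a retry with exponential backoff and jitter. This is not the actual middlewared code: `retry_with_backoff`, the set of retryable errors, and the delay values are all made up for illustration. The only part taken from the log is that aiohttp raised `RuntimeError("Connection closed.")`.

```python
import asyncio
import logging
import random
from typing import Awaitable, Callable, Optional, TypeVar

T = TypeVar("T")
log = logging.getLogger(__name__)

# Hypothetical choice of errors worth retrying. RuntimeError is included only
# because aiohttp raised RuntimeError("Connection closed.") in the log.
RETRYABLE = (RuntimeError, ConnectionError, asyncio.TimeoutError)


async def retry_with_backoff(
    fetch: Callable[[], Awaitable[T]],
    attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
) -> Optional[T]:
    """Call `fetch` up to `attempts` times, sleeping with exponential backoff
    plus random jitter between failures. Returns None instead of raising
    once every attempt has failed."""
    for attempt in range(attempts):
        try:
            return await fetch()
        except RETRYABLE as exc:
            if attempt == attempts - 1:
                log.warning("update check gave up after %d attempts: %r", attempts, exc)
                return None
            # The delay cap doubles each attempt (1s, 2s, 4s, ...) up to max_delay;
            # sleeping a random amount below it keeps clients from retrying in lockstep.
            delay = min(max_delay, base_delay * 2 ** attempt)
            await asyncio.sleep(random.uniform(0, delay))
    return None


async def _demo() -> None:
    calls = 0

    async def flaky_fetch() -> str:
        # Fake stand-in for the manifest request: fails twice, then succeeds.
        nonlocal calls
        calls += 1
        if calls < 3:
            raise RuntimeError("Connection closed.")
        return "fake-digest"

    result = await retry_with_backoff(flaky_fetch, base_delay=0.01)
    print(result, "after", calls, "calls")  # fake-digest after 3 calls


if __name__ == "__main__":
    asyncio.run(_demo())
```

The point is that a failed check ends up logged and skipped until the next scheduled run, instead of surfacing as an unhandled task exception and getting retried immediately.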

After all of this was settled (I'm still not sure what actually fixed it, unless GitHub was returning errors because of too many calls), I was able to update to 25.04.2 again and all is fine.
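If the "too many calls" theory is right, a per-image throttle that also remembers *failed* attempts would keep a flaky registry from being hit on every check cycle. Rough sketch only: `CheckThrottle` and the one-hour interval are hypothetical, and I don't know how middlewared actually schedules these checks.

```python
import time
from typing import Callable, Dict


class CheckThrottle:
    """Hypothetical per-image throttle: remembers when each image was last
    checked (whether the check succeeded or failed) and skips re-checking it
    until `min_interval` seconds have passed."""

    def __init__(self, min_interval: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.min_interval = min_interval
        self._clock = clock  # injectable so the demo can use a fake clock
        self._last_attempt: Dict[str, float] = {}

    def should_check(self, image: str) -> bool:
        last = self._last_attempt.get(image)
        return last is None or self._clock() - last >= self.min_interval

    def record_attempt(self, image: str) -> None:
        # Recorded after failures too, so a registry that keeps closing the
        # connection is not contacted again on every check cycle.
        self._last_attempt[image] = self._clock()


if __name__ == "__main__":
    now = [0.0]  # fake clock, in seconds
    throttle = CheckThrottle(min_interval=3600, clock=lambda: now[0])
    image = "ghcr.io/open-webui/open-webui:main"

    print(throttle.should_check(image))  # True: never checked
    throttle.record_attempt(image)       # e.g. after the manifest fetch failed
    print(throttle.should_check(image))  # False: checked less than an hour ago
    now[0] += 3600
    print(throttle.should_check(image))  # True: the interval has passed
```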

Anyway, sorry for posting in this thread; hope this helps someone.

EDIT: It's happening again with other ghcr images, so there might be a bug around the aiohttp call that is throwing. I've turned off update checks for now.