Updated: 21 September 2025 - Multi-Report v3.20, and Drive-Selftest v1.06

Multi-Report is script which was originally designed to monitor key Hard Drive and Solid State Drive data and generate a nice email with a chart to depict the drives and their SMART data. And of course to sound the warning when something is worth noting.

Features:

- Seagate Drive SCAM test

- Easy To Run (Depends on your level on knowledge)

- Very Customizable

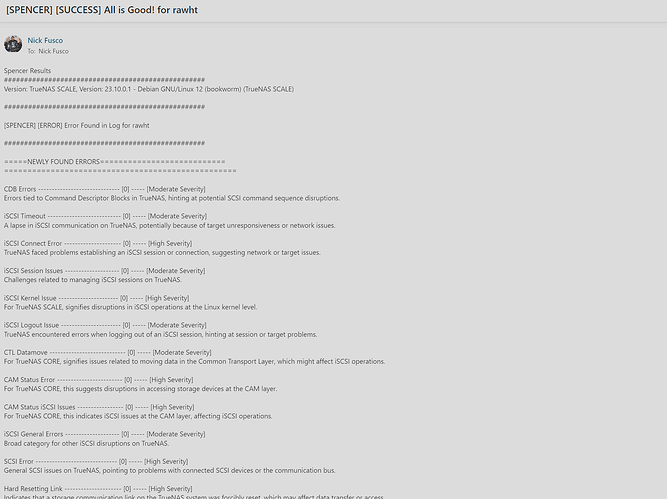

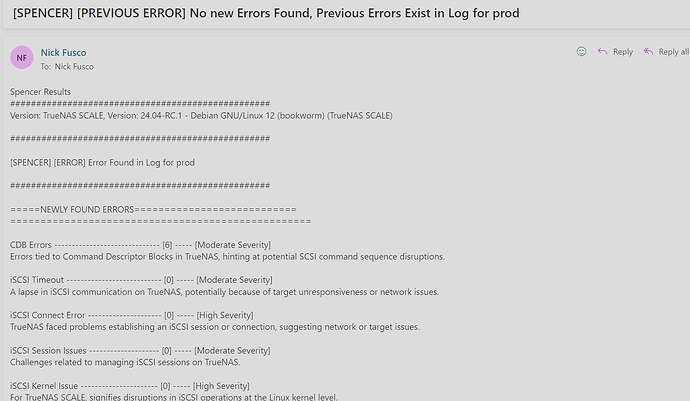

- Sends an Email clearly defining the status of your system

- Runs SMART Self-tests on NVMe (CORE/SCALE cannot do this as of this writing)

- Online Updates (Manual or Automatic)

- Has Human Support (when I am not living my life)

- Saves Statistical Data in CSV file, can be used with common spreadsheets

- Sets NVMe to Low Power in CORE (SCALE does this automatically)

- Set TLER

- And many other smaller things

SMART was designed to attempt to provide up to 24 hours to warn a user of pending doom. It is very difficult to predict a failure however short of a few things, SMART works pretty well. Up to 24 hours, that means when you find out about a problem, that failure could happen at any moment. Just heed the warning.

Change from previous versions

Multi-Report no longer performs SMART tests directly. It now calls companion file drive_selftest.sh to run the SMART tests. This was done as a precursor to TrueNAS SCALE finally including NVMe SMART test scheduling. This also includes NVMe ONLY testing as a option should you desire it.

Another key feature is sending your email a copy of the TrueNAS configuration file weekly (the default). How many times have you seen someone lose that darn configuration file?

There is an Automatic Update feature that by default will notify you an update to the script exists however Automatic Updates is disabled (default). You can change this setting to allow fully automated updates if you desire.

I have built in troubleshooting help which if you specifically command, you can send me (joeschmuck) an email that contains your drive(s) SMART data and other Multi-Report data. I can then figure out if you need to make a small configuration change or I need to fix the script.

All the files are here on GitHub. I retain a few previous versions in case someone wants to roll back. The files are all dated. Grab the multi_report_vXXXX.txt script and the Multi_Report_User_Guide.pdf, that should get you started.

There is a nice thread on the old TrueNAS Forums Here that is a good history.

Download the Script, take it for a spin. Sorry, this forum does not allow uploading of PDF files, so grab the user guide either from GitHub or run the script using the -update switch and it will grab the most current files.

When you do run this script, after you have performed the configuration which can be very minimal, it will download the companion script called Drive-Selftest. I separated the one Multi-Report script into two pieces as it allows easier maintaining. I also expect TrueNAS GUI to start supporting NVMe testing, sooner than later.

Multi-Report Version 3.20 is just bit of grooming, making it all look pretty. Seagate drives will now display the SMART drive data for ID1 and ID7 to only show actual error counts.

An alternative to Multi-Report is FreeNAS-Report, also found in this resource section. Both are based off similar code (and others who modified the script over the years, names are at the top of the script) and it is freeware to share.

Note: Multi-Report Defaults are setup for the script to be run once a day. If you choose to run less frequently, a few changes should be made.

Note: If you have problems running the script due to lack of privileges, read the User Guide on the Initial Setup.